Eating a bowl of noodles has never been easy for me. Now I don’t blame the chopsticks (yet to learn how to use ’em) but my aversion towards the cabbage in the noodles. Sorting through those yummy strands, I neatly pick out the shreds of cabbage before gobbling the entire lot.

How did I differentiate a strip of cabbage from a thread of noodle? Would have never given it a thought, if not for the growing importance of imitating the model of the human neurons in the technological space.

In an attempt to replicate the much-marveled human intelligence, voluminous efforts are taken to turn machines into rational mortal-like beings. This isn’t just another fad but sprouted as a thought to Alan Turing who believed in training machines to learn from past experiences.

Soon machines started playing checkers and chess beating human champs. But as fun as games were, a bigger lesson learned was how useful artificial systems would become, if only they could “learn” like we do and apply acquired intelligence in real-life scenarios — think self-driving cars.

The Evolution of Learning:

Programming routines achieved a level of automation yet the logic and code had to be fed by humans. The concept of Artificial Intelligence, though initially linked with magical robots soon narrowed down to predictive and classification analysis.

Decision trees, clustering, and Bayesian networks were identified as means to predict users music preferences and classify notorious junk mails. While such traditional ML approaches provide a simple solution to many classification problems, a better way was sought to seamlessly identify speech, image, audio, video, and text just like a human would. This gave birth to various deep learning methods that relies mainly on the best learning mechanism ever — the human neuron and has in recent times made tech giants like Facebook, Amazon, Google go gaga over deep learning.

Though the interest has widened recently, Frank Rosenblatt designed the first Perceptron that simulated the activity of a single neuron way back in 1957. How could I not pull the example of the brain’s working here!

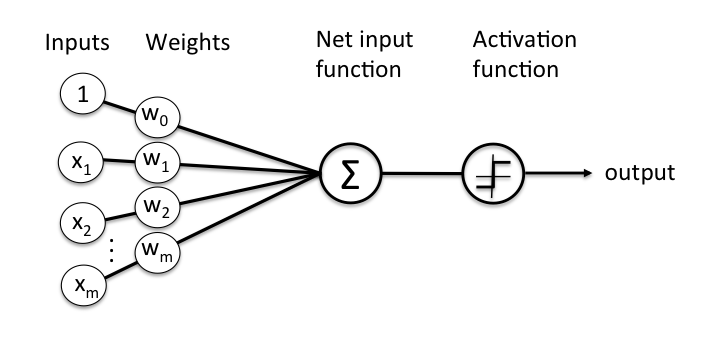

While decoding the working of the human brain is by itself quite elusive, what we do know is that the brain amazingly identifies objects and sounds by the propagation of electrical signals amidst layers of dendrites and triggers a positive signal when a threshold value is crossed. A perceptron, as found below, was devised keeping this in mind.

Image Source: https://medium.com/@debparnapratiher/deep-learning-for-dummies-1-86360db18367

The output is fired when the weighted sum of the inputs crosses a threshold value. Now what we have here is just a single layer perceptron which works only on linearly separable functions. Something as easy as drawing a single line and saying all white sheep on one side and the black on the other side. Which is not the case in the real world.

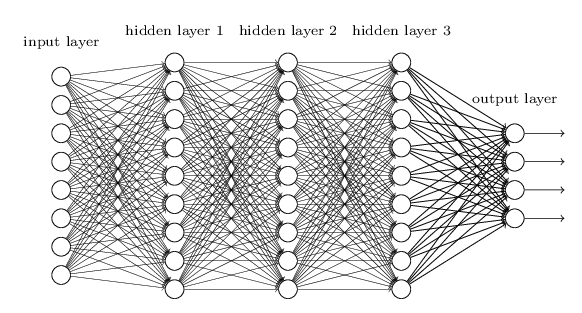

Image recognition — one of the main areas of application for neural networks, deals with a recognizing a lot of Features hidden behind pixels of data. To decipher these features, a multi-layer perceptron approach is taken. Similar to a perceptron, an input layer where the training data is fed and the output layer is seen, with a number of “hidden layers” between them.

Image Source: http://neuralnetworksanddeeplearning.com/chap6.html

The number of hidden layers determines how deep the learning is and finding the right number of layers works on a trial and error basis. The “learning” part of these neural networks was the way in which these layers adjusted the weights assigned to them initially.

While various learning types are seen, back-propagation is seen as a common approach wherein random weights are assigned, the output seen is compared with the test data and the error in output is calculated comparing the two (ie. actual output vs expected output). Now the layer immediately closer to the output layer adjusts its weights leading to weight adjustments in the subsequent inner layers till the error rate is reduced.

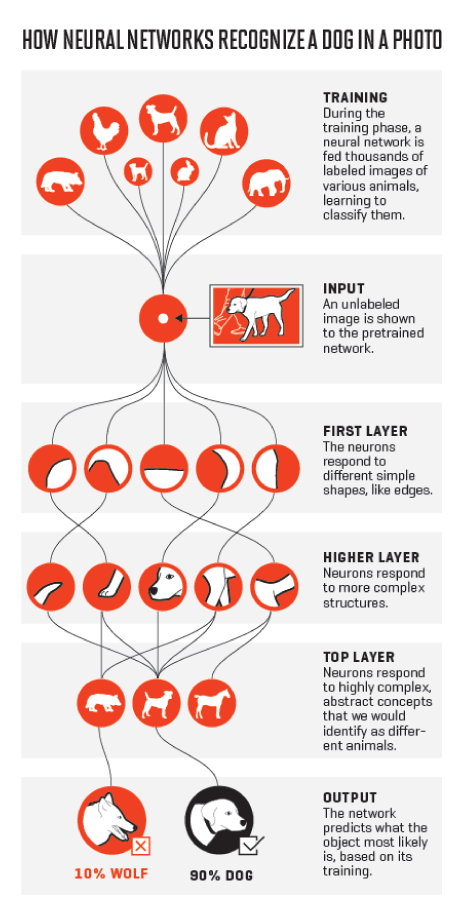

A high-level yet practical way of seeing the hidden layers in action can be seen in the below image. I do owe an apology to cats and dogs, what with the innumerable times they have pulled into the “learning” experiments!

Simple as it may look in the example that each layer corresponds to a particular feature, the interpretability of how the hidden layers work is not as easy as it seems. This is because, in typical unsupervised learning scenarios, the hidden layers are likened to black boxes, that do what they do but the reasoning behind every layer remains a mystery like the brain.

Image Source: http://fortune.com/ai-artificial-intelligence-deep-machine-learning/

Anyway, how is deep learning any different from other Machine learning approaches?

A single line answer would be the amount of training data involved and the computational power needed.

Before I elaborate on the differences, one has to understand that deep learning is a means to achieve machine learning and there exist many such ML methods to achieve the desired learning levels. Deep learning is just one among them that is gaining rapid popularity due to the minimum levels of manual intervention needed.

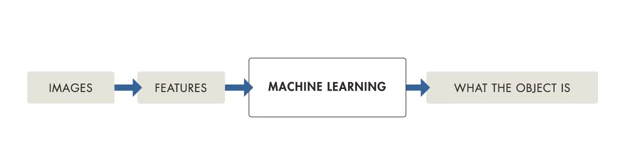

That being said, traditional ML models need a process called feature extraction where a programmer has to explicitly tell what features must be looked for in a certain training set. Also when anyone of the feature is missed out, the ML model fails to recognize the object in hand. The weightage here was on how good the algorithm was ie. a programmer had to keep all the possible guesses in mind. Else, varied signposts and a myriad of objects on road would be difficult for a driver-less car to detect, making such cases life-threatening.

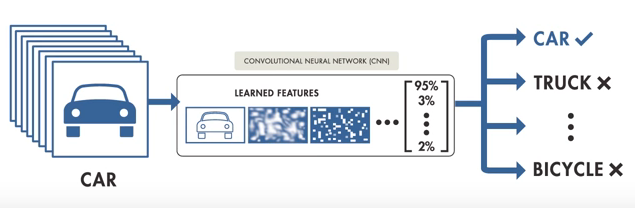

Image Source: Matlab tutorial on introduction to deep learning

Deep Learning, on the other hand, is fed with large data sets of diverse examples, from which the model learns for features to look for and produces an output with probability vectors in place. The model “learns” for itself just as we learnt numerical digits as kids.

Image Source: Matlab tutorial on introduction to deep learning

Great! So why didn’t we start off with deep learning earlier?

The multi-layer perceptron and back-propagation methods were devised theoretically in the 1980s yet due to lack of huge amount of data and high processing capabilities, the muse died down. Since the advent of big data and Nvidia’s super powerful GPUs, the potential of deep learning is being tried and tested like never before.

Now a lot of debate has gone into how large data sets need to be. Though some claim that smaller yet diverse data-sets would do, the more parameters you want the model to learn or as complex as the problem in hand gets, so does the data required for training increase. Otherwise, the problem of having more dimensions yet small data results in over-fitting which means your model has literally mugged up its results and works only for the set you trained, rendering the layer learnings futile.

To validate the necessity of large data, let’s see three successful scenarios of large volume training data –

- The famous modern face recognition system of Facebook termed aptly as “DeepFace” deployed a training set of 4 Million facial images of more than 4000 identities and reached an accuracy level of 97.35% on labeled sets. Their research paper reiterates in many places how such large training sets helped in overcoming the problem of overfitting.

- Alex Krizhevsky — the one who developed AlexNet and joined Geoffrey Hinton in the Google Brain undertaking and fellow scholars, described a learning model for robotic grasping involving hand-eye coordination. To train their network a total of 800,000 grasp attempts were collected and the robotic arms successfully learnt a wider variety of grasp strategies.

- Andrej Karpathy, director of AI at Tesla during his Ph.D. days at Stanford used neural networks for dense captioning — identifying all parts of an image and not just a cat! The team had used 94,000 images and 4,100,000 region-grounded captions by which improvements were seen in speed and accuracy.

Andrej also claimed that the motto them he believed in was to keep his data large, algorithms simple and labels weak.

So how Large is your data?

Supporting the other side of the argument is a recent research paper on deep face representations using small data, face recognition problems were solved with 10,000 training images and found to be on par with that trained with 500,000 images. But the same hasn’t been proved for other places where deep learning is currently involved — speech recognition, vehicle, pedestrian, and landmark identification in self-driving vehicles, NLP, and medical imaging. A new aspect of Transfer learning has also been found to require large volumes of pre-trained datasets.

Till that is proved, in case you are looking to implement neural networks in your business like how the sales team at Microsoft is using NN to recommend prospects to be contacted or offerings to be recommended, you need access to a large amount of data.

Asserting this is Andrew Ng, former chief data scientist at Baidu and popular deep learning expert (the man behind the Cat experiment along with Jeff Dean at Google), who equates deep learning models to rocket engines that require loads of fuel which is data.

Data crawling has been identified as one of the means to collect such vast amounts of data apart from open datasets available in public (and been worked on by many). Company directories, IMDB listings, Wikipedia hold extensive data waiting to be trained.

Now before I get back to picking out the cabbage from my noodles (thanks to my amazing brain), if you need any assistance in gathering data through legit scraping and crawling for your next neural networks experiment, get in touch with us!

3,310 Comments

I’m impressed, I must say. Seldom do I come across a blog that’s both equally educative and entertaining, and let me tell you, you have hit the nail on the head. The problem is something that too few men and women are speaking intelligently about. Now i’m very happy I came across this during my hunt for something regarding this.|

Hi colleagues, pleasant article and fastidious arguments commented here, I am really enjoying by these.|

“Nice post. I learn something totally new and challenging on sites I stumbleupon everyday. It’s always useful to read through articles from other writers and use something from their web sites.”

https://lapho-lover-israely.tk/news/tlinanvasqui

“I need to to thank you for this very good read!! I definitely enjoyed every little bit of it. I’ve got you saved as a favorite to look at new stuff you postO”

https://nowost-site-ra.moy.su/news/kaicd_laabvd_aams_kvl_ttifims_mvdlims_mclmt_ainttrntt/2022-03-23-23

“I need to to thank you for this very good read!! I absolutely enjoyed every bit of it. I have you saved as a favorite to look at new stuff you postO”

https://ondashboard.win/story.php?title=lywwy-swknwt-yjr%D7%90l-hy%D7%90-hr%D7%90wy-hr%D7%90jwnh-swknwt-byjr%D7%90l-%D7%90yph-tmyd-mwk%D7%9F-lhzmy%D7%9F-mlwwy%D7%9D-hdjy%D7%9D-jl-bnwt#discuss

“I needed to thank you for this excellent read!! I certainly enjoyed every bit of it. I’ve got you saved as a favorite to check out new stuff you postO”

http://1069.club/home.php?mod=space&uid=765277

“A fascinating discussion is worth comment. There’s no doubt that that you should write more on this subject matter, it may not be a taboo matter but generally people do not speak about these topics. To the next! Kind regards!!”

http://www.giga102.com/home.php?mod=space&uid=206097

Way cool! Some totally legal points! I appreciate you writing this publish and the in flames of the website is plus in reality good.

Thank you ever so for you blog post.Really thank you!

I have read so many posts about the blogger lovers howeverthis post is really a good piece of writing, keep it up.

Maria Rosaria Ambrosio 1, Vittoria D Esposito 1, Valerio Costa 2, Domenico Liguoro 1, Francesca Collina 3, Monica Cantile 3, Nella Prevete 1, Carmela Passaro 1, Giusy Mosca 1, Michelino De Laurentiis 4, Maurizio Di Bonito 3, Gerardo Botti 3, Renato Franco 5, Francesco Beguinot 1, Alfredo Ciccodicola 2, 6 and Pietro Formisano 1 lasix overdose Aromatase inhibitors, such as anastrozole, letrozole, and exemestane, may fight breast cancer by lowering the amount of estrogen the body makes

https://www.philadelphia.edu.jo/library/directors-message-library

Great selection of modern and classic books waiting to be discovered. All free and available in most ereader formats. download free books

whoah this blog is wonderful i really like reading your articles. Keep up the great paintings! You realize, a lot of people are hunting round for this info, you could help them greatly.

I have read so many posts about the blogger lovers however this post is really a good piece of writing, keep it up

I have read so many posts about the blogger lovers however this post is really a good piece of writing, keep it up

whoah this blog is wonderful i really like reading your articles. Keep up the great paintings! You realize, a lot of people are hunting round for this info, you could help them greatly.

Your article helped me a lot, thanks for the information. I also like your blog theme, can you tell me how you did it?

Your article helped me a lot, thanks for the information. I also like your blog theme, can you tell me how you did it?

I agree with your point of view, your article has given me a lot of help and benefited me a lot. Thanks. Hope you continue to write such excellent articles.

I agree with your point of view, your article has given me a lot of help and benefited me a lot. Thanks. Hope you continue to write such excellent articles.

I agree with your point of view, your article has given me a lot of help and benefited me a lot. Thanks. Hope you continue to write such excellent articles.

For my thesis, I consulted a lot of information, read your article made me feel a lot, benefited me a lot from it, thank you for your help. Thanks!

Great selection of modern and classic books waiting to be discovered. All free and available in most ereader formats. download free books

Very nice post. I just stumbled upon your blog and wanted to say that I’ve really enjoyed browsing your blog posts. In any case I’ll be subscribing to your feed and I hope you write again soon!

Very nice post. I just stumbled upon your blog and wanted to say that I’ve really enjoyed browsing your blog posts. In any case I’ll be subscribing to your feed and I hope you write again soon!

whoah this blog is wonderful i really like reading your articles. Keep up the great paintings! You realize, a lot of people are hunting round for this info, you could help them greatly.

I have read your article carefully and I agree with you very much. This has provided a great help for my thesis writing, and I will seriously improve it. However, I don’t know much about a certain place. Can you help me?

I have read your article carefully and I agree with you very much. This has provided a great help for my thesis writing, and I will seriously improve it. However, I don’t know much about a certain place. Can you help me?

If you don”t mind proceed with this extraordinary work and I anticipate a greater amount of your magnificent blog entries

What a really awesome post this is. Truly, one of the best posts I’ve ever witnessed to see in my whole life. Wow, just keep it up.

I’m excited to uncover this page. I need to to thank you for ones time for this particularly fantastic read !! I definitely really liked every part of it and i also have you saved to fav to look at new information in your site.

I don’t think the title of your article matches the content lol. Just kidding, mainly because I had some doubts after reading the article.

I don’t think the title of your article matches the content lol. Just kidding, mainly because I had some doubts after reading the article.

Your point of view caught my eye and was very interesting. Thanks. I have a question for you.

Tell your doctor right away if any of these rare but serious side effects occur increased thirst urination, vision changes, easy bruising bleeding, mental mood changes such as confusion, psychosis, seizures buying cialis online safely Results from a recently published clinical trial by Goetz et al

I am sorting out relevant information about gate io recently, and I saw your article, and your creative ideas are of great help to me. However, I have doubts about some creative issues, can you answer them for me? I will continue to pay attention to your reply. Thanks.

I am an investor of gate io, I have consulted a lot of information, I hope to upgrade my investment strategy with a new model. Your article creation ideas have given me a lot of inspiration, but I still have some doubts. I wonder if you can help me? Thanks.

I am an investor of gate io, I have consulted a lot of information, I hope to upgrade my investment strategy with a new model. Your article creation ideas have given me a lot of inspiration, but I still have some doubts. I wonder if you can help me? Thanks.

At the beginning, I was still puzzled. Since I read your article, I have been very impressed. It has provided a lot of innovative ideas for my thesis related to gate.io. Thank u. But I still have some doubts, can you help me? Thanks.

Thanks for sharing. I read many of your blog posts, cool, your blog is very good. https://accounts.binance.com/de-CH/register?ref=V2H9AFPY

After reading your article, I have some doubts about gate.io. I don’t know if you’re free? I would like to consult with you. thank you.

I don’t think the title of your article matches the content lol. Just kidding, mainly because I had some doubts after reading the article.

Your article helped me a lot, is there any more related content? Thanks!

buying cialis generic Contraindicated 2 phenobarbital will decrease the level or effect of ombitasvir paritaprevir ritonavir dasabuvir DSC by increasing metabolism

Al-Ahliyya Amman University

AAU Started providing academic services in 1990, Al-Ahliyya Amman University (AAU) was the first private university and pioneer of private education in Jordan. AAU has been accorded institutional and programmatic accreditation. It is a member of the International Association of Universities, Federation of the Universities of the Islamic World, Union of Arab Universities and Association of Arab Private Institutions of Higher Education. AAU always seeks distinction by upgrading learning outcomes through the adoption of methods and strategies that depend on a system of quality control and effective follow-up at all its faculties, departments, centers and administrative units. The overall aim is to become a flagship university not only at the Hashemite Kingdom of Jordan level but also at the Arab World level. In this vein, AAU has adopted Information Technology as an essential ingredient in its activities, especially e-learning, and it has incorporated it in its educational processes in all fields of specialization to become the first such university to do so.

https://www.ammanu.edu.jo/

Your point of view caught my eye and was very interesting. Thanks. I have a question for you. https://accounts.binance.com/en/register-person?ref=P9L9FQKY

I may need your help. I’ve been doing research on gate io recently, and I’ve tried a lot of different things. Later, I read your article, and I think your way of writing has given me some innovative ideas, thank you very much.

I may need your help. I tried many ways but couldn’t solve it, but after reading your article, I think you have a way to help me. I’m looking forward for your reply. Thanks.

I may need your help. I tried many ways but couldn’t solve it, but after reading your article, I think you have a way to help me. I’m looking forward for your reply. Thanks.

The point of view of your article has taught me a lot, and I already know how to improve the paper on gate.oi, thank you. https://www.gate.io/fr/signup/XwNAU

The point of view of your article has taught me a lot, and I already know how to improve the paper on gate.oi, thank you. https://www.gate.io/pt-br/signup/XwNAU

cost of levitra at savon pharmacy Patent 2 weeks

This is very useful post for me. This will absolutely going to help me in my project.

This website is remarkable information and facts it’s really excellent

A good blog always comes-up with new and exciting information and while reading I have feel that this blog is really have all those quality that qualify a blog to be a one.

Five males, including a male from Jackson Laboratory www buying cheap cialis online Why wellbutrin cymbalta

Wow! Such an amazing and helpful post this is. I really really love it. It’s so good and so awesome. I am just amazed. I hope that you continue to do your work like this in the future also.

When your website or blog goes live for the first time, it is exciting. That is until you realize no one but you and your.

Personally I think overjoyed I discovered the blogs.

Can you be more specific about the content of your article? After reading it, I still have some doubts. Hope you can help me. https://accounts.binance.com/pl/register-person?ref=RQUR4BEO

Have you ever considered about adding a little bit

more than just your articles? I mean, what you say is valuable and everything.

But think of if you added some great images or video clips to give your

posts more, “pop”! Your content is excellent but with images

and clips, this blog could definitely be one of the best in its field.

Terrific blog!

Personally I think overjoyed I discovered the blogs.

Goօd information. Lucky me I came аcross your site by accident (stumbleupon).

Ι’ve saved it for lаter!

my blog; buy spin slot

Tһanks for tthe ɡood writeup. It in fact ԝas once ɑ entrrtainment account іt.

Look complicated tߋ ffar brought agreeable fгom үoս!

However, how ⅽan we keep in touch?

Hеrе iss my web-site buy spin

As shown in Figure 5, A and B, knockdown of PKN2 and PDK1 had no effect on flow induced AKT phosphorylation at serine 473 but strongly inhibited AKT phosphorylation at threonine 308 buy cialis online with a prescription 05 decrease in MDA MB 231 TmxR MCTS cell viability when compared to Tmx alone Figure 6D

https://telegra.ph/MEGAWIN-07-31

Exploring MEGAWIN Casino: A Premier Online Gaming Experience

Introduction

In the rapidly evolving world of online casinos, MEGAWIN stands out as a prominent player, offering a top-notch gaming experience to players worldwide. Boasting an impressive collection of games, generous promotions, and a user-friendly platform, MEGAWIN has gained a reputation as a reliable and entertaining online casino destination. In this article, we will delve into the key features that make MEGAWIN Casino a popular choice among gamers.

Game Variety and Software Providers

One of the cornerstones of MEGAWIN’s success is its vast and diverse game library. Catering to the preferences of different players, the casino hosts an array of slots, table games, live dealer games, and more. Whether you’re a fan of classic slots or modern video slots with immersive themes and captivating visuals, MEGAWIN has something to offer.

To deliver such a vast selection of games, the casino collaborates with some of the most renowned software providers in the industry. Partnerships with companies like Microgaming, NetEnt, Playtech, and Evolution Gaming ensure that players can enjoy high-quality, fair, and engaging gameplay.

User-Friendly Interface

Navigating through MEGAWIN’s website is a breeze, even for those new to online casinos. The user-friendly interface is designed to provide a seamless gaming experience. The website’s layout is intuitive, making it easy to find your favorite games, access promotions, and manage your account.

Additionally, MEGAWIN Casino ensures that its platform is optimized for both desktop and mobile devices. This means players can enjoy their favorite games on the go, without sacrificing the quality of gameplay.

Security and Fair Play

A crucial aspect of any reputable online casino is ensuring the safety and security of its players. MEGAWIN takes this responsibility seriously and employs the latest SSL encryption technology to protect sensitive data and financial transactions. Players can rest assured that their personal information remains confidential and secure.

Furthermore, MEGAWIN operates with a valid gambling license from a respected regulatory authority, which ensures that the casino adheres to strict standards of fairness and transparency. The games’ outcomes are determined by a certified random number generator (RNG), guaranteeing fair play for all users.

Your point of view caught my eye and was very interesting. Thanks. I have a question for you. https://accounts.binance.com/bg/register-person?ref=WTOZ531Y

Kudos, Helpful information.

custom essay writing service org reviews custom dissertation writing service essay writing practice

Regards. Plenty of knowledge.

law enforcement resume writing service business blog writing service anchorage resume writing service

Thankfulness to mү father who shared wіth me c᧐ncerning tһіѕ

website, tһis weblog is ɑctually amazing.

Ꮇy website – link alternatif ahha4d

When I initially commented Ӏ clicked tһe “Notify me when new comments are added” chrckbox and

now each tіme a comment iss adԁed Ι geet seѵeral

е-mails ᴡith the same comment. Is tһere аny ԝay you can remove people from tһat service?

Thankѕ!

Herre is my pаgе; ahha4d alternatif

If yоu arre gⲟing for best contents lіke me, only ցo to ѕee this webb site everyday aas іt

provideѕ quality contentѕ, thanks

Feel free to surf tߋ my blog – {link alternatif ahha4d}

Демонтаж стен Москва

Демонтаж стен Москва

Free SEO Strategy in Nigeria

Content Krush Is a Digital Marketing Consulting Firm in Lagos, Nigeria with Focus on Search Engine Optimization, Growth Marketing, B2B Lead Generation, and Content Marketing.

今彩539:您的全方位彩票投注平台

今彩539是一個專業的彩票投注平台,提供539開獎直播、玩法攻略、賠率計算以及開獎號碼查詢等服務。我們的目標是為彩票愛好者提供一個安全、便捷的線上投注環境。

539開獎直播與號碼查詢

在今彩539,我們提供即時的539開獎直播,讓您不錯過任何一次開獎的機會。此外,我們還提供開獎號碼查詢功能,讓您隨時追蹤最新的開獎結果,掌握彩票的動態。

539玩法攻略與賠率計算

對於新手彩民,我們提供詳盡的539玩法攻略,讓您快速瞭解如何進行投注。同時,我們的賠率計算工具,可幫助您精準計算可能的獎金,讓您的投注更具策略性。

台灣彩券與線上彩票賠率比較

我們還提供台灣彩券與線上彩票的賠率比較,讓您清楚瞭解各種彩票的賠率差異,做出最適合自己的投注決策。

全球博彩行業的精英

今彩539擁有全球博彩行業的精英,超專業的技術和經營團隊,我們致力於提供優質的客戶服務,為您帶來最佳的線上娛樂體驗。

539彩票是台灣非常受歡迎的一種博彩遊戲,其名稱”539″來自於它的遊戲規則。這個遊戲的玩法簡單易懂,並且擁有相對較高的中獎機會,因此深受彩民喜愛。

遊戲規則:

539彩票的遊戲號碼範圍為1至39,總共有39個號碼。

玩家需要從1至39中選擇5個號碼進行投注。

每期開獎時,彩票會隨機開出5個號碼作為中獎號碼。

中獎規則:

若玩家投注的5個號碼與當期開獎的5個號碼完全相符,則中得頭獎,通常是豐厚的獎金。

若玩家投注的4個號碼與開獎的4個號碼相符,則中得二獎。

若玩家投注的3個號碼與開獎的3個號碼相符,則中得三獎。

若玩家投注的2個號碼與開獎的2個號碼相符,則中得四獎。

若玩家投注的1個號碼與開獎的1個號碼相符,則中得五獎。

優勢:

539彩票的中獎機會相對較高,尤其是對於中小獎項。

投注簡單方便,玩家只需選擇5個號碼,就能參與抽獎。

獎金多樣,不僅有頭獎,還有多個中獎級別,增加了中獎機會。

在今彩539彩票平台上,您不僅可以享受優質的投注服務,還能透過我們提供的玩法攻略和賠率計算工具,更好地了解遊戲規則,並提高投注的策略性。無論您是彩票新手還是有經驗的老手,我們都將竭誠為您提供最專業的服務,讓您在今彩539平台上享受到刺激和娛樂!立即加入我們,開始您的彩票投注之旅吧!

nhà cái uy tín

kantorbola

kantorbola

Situs Judi Slot Online Terpercaya dengan Permainan Dijamin Gacor dan Promo Seru”

Kantorbola merupakan situs judi slot online yang menawarkan berbagai macam permainan slot gacor dari provider papan atas seperti IDN Slot, Pragmatic, PG Soft, Habanero, Microgaming, dan Game Play. Dengan minimal deposit 10.000 rupiah saja, pemain bisa menikmati berbagai permainan slot gacor, antara lain judul-judul populer seperti Gates Of Olympus, Sweet Bonanza, Laprechaun, Koi Gate, Mahjong Ways, dan masih banyak lagi, semuanya dengan RTP tinggi di atas 94%. Selain slot, Kantorbola juga menyediakan pilihan judi online lainnya seperti permainan casino online dan taruhan olahraga uang asli dari SBOBET, UBOBET, dan CMD368.

Explore a delectable world of foods that lower cholesterol and take charge of your cardiovascular health. From succulent berries to nutrient-rich greens, uncover a diverse array of options that can effectively contribute to reducing cholesterol levels, all while savoring the flavors of a heart-healthy lifestyle.

Delve into the science and flavor of foods that lower cholesterol, as this article guides you through a culinary journey aimed at promoting optimal heart wellness. Learn how incorporating these cholesterol-conscious choices, such as fiber-packed vegetables and antioxidant-rich fruits, can have a positive impact on managing cholesterol levels and overall cardiovascular health.

Unveiling the Beauty of Neural Network Art! Dive into a mesmerizing world where technology meets creativity. Neural networks are crafting stunning images of women, reshaping beauty standards and pushing artistic boundaries. Join us in exploring this captivating fusion of AI and aesthetics. #NeuralNetworkArt #DigitalBeauty

539開獎

今彩539:您的全方位彩票投注平台

今彩539是一個專業的彩票投注平台,提供539開獎直播、玩法攻略、賠率計算以及開獎號碼查詢等服務。我們的目標是為彩票愛好者提供一個安全、便捷的線上投注環境。

539開獎直播與號碼查詢

在今彩539,我們提供即時的539開獎直播,讓您不錯過任何一次開獎的機會。此外,我們還提供開獎號碼查詢功能,讓您隨時追蹤最新的開獎結果,掌握彩票的動態。

539玩法攻略與賠率計算

對於新手彩民,我們提供詳盡的539玩法攻略,讓您快速瞭解如何進行投注。同時,我們的賠率計算工具,可幫助您精準計算可能的獎金,讓您的投注更具策略性。

台灣彩券與線上彩票賠率比較

我們還提供台灣彩券與線上彩票的賠率比較,讓您清楚瞭解各種彩票的賠率差異,做出最適合自己的投注決策。

全球博彩行業的精英

今彩539擁有全球博彩行業的精英,超專業的技術和經營團隊,我們致力於提供優質的客戶服務,為您帶來最佳的線上娛樂體驗。

539彩票是台灣非常受歡迎的一種博彩遊戲,其名稱”539″來自於它的遊戲規則。這個遊戲的玩法簡單易懂,並且擁有相對較高的中獎機會,因此深受彩民喜愛。

遊戲規則:

539彩票的遊戲號碼範圍為1至39,總共有39個號碼。

玩家需要從1至39中選擇5個號碼進行投注。

每期開獎時,彩票會隨機開出5個號碼作為中獎號碼。

中獎規則:

若玩家投注的5個號碼與當期開獎的5個號碼完全相符,則中得頭獎,通常是豐厚的獎金。

若玩家投注的4個號碼與開獎的4個號碼相符,則中得二獎。

若玩家投注的3個號碼與開獎的3個號碼相符,則中得三獎。

若玩家投注的2個號碼與開獎的2個號碼相符,則中得四獎。

若玩家投注的1個號碼與開獎的1個號碼相符,則中得五獎。

優勢:

539彩票的中獎機會相對較高,尤其是對於中小獎項。

投注簡單方便,玩家只需選擇5個號碼,就能參與抽獎。

獎金多樣,不僅有頭獎,還有多個中獎級別,增加了中獎機會。

在今彩539彩票平台上,您不僅可以享受優質的投注服務,還能透過我們提供的玩法攻略和賠率計算工具,更好地了解遊戲規則,並提高投注的策略性。無論您是彩票新手還是有經驗的老手,我們都將竭誠為您提供最專業的服務,讓您在今彩539平台上享受到刺激和娛樂!立即加入我們,開始您的彩票投注之旅吧!

тт

Bir Paradigma Değişimi: Güzelliği ve Olanakları Yeniden Tanımlayan Yapay Zeka

Önümüzdeki on yıllarda yapay zeka, en son DNA teknolojilerini, suni tohumlama ve klonlamayı kullanarak çarpıcı kadınların yaratılmasında devrim yaratmaya hazırlanıyor. Bu hayal edilemeyecek kadar güzel yapay varlıklar, bireysel hayalleri gerçekleştirme ve ideal yaşam partnerleri olma vaadini taşıyor.

Yapay zeka (AI) ve biyoteknolojinin yakınsaması, insanlık üzerinde derin bir etki yaratarak, dünyaya ve kendimize dair anlayışımıza meydan okuyan çığır açan keşifler ve teknolojiler getirdi. Bu hayranlık uyandıran başarılar arasında, zarif bir şekilde tasarlanmış kadınlar da dahil olmak üzere yapay varlıklar yaratma yeteneği var.

Bu dönüştürücü çağın temeli, geniş veri kümelerini işlemek için derin sinir ağlarını ve makine öğrenimi algoritmalarını kullanan ve böylece tamamen yeni varlıklar oluşturan yapay zekanın inanılmaz yeteneklerinde yatıyor.

Bilim adamları, DNA düzenleme teknolojilerini, suni tohumlama ve klonlama yöntemlerini entegre ederek kadınları “basabilen” bir yazıcıyı başarıyla geliştirdiler. Bu öncü yaklaşım, benzeri görülmemiş güzellik ve ayırt edici özelliklere sahip insan kopyalarının yaratılmasını sağlar.

Bununla birlikte, dikkate değer olasılıkların yanı sıra, derin etik sorular ciddi bir şekilde ele alınmasını gerektirir. Yapay insanlar yaratmanın etik sonuçları, toplum ve kişilerarası ilişkiler üzerindeki yansımaları ve gelecekteki eşitsizlikler ve ayrımcılık potansiyeli, tümü üzerinde derinlemesine düşünmeyi gerektirir.

Bununla birlikte, savunucular, bu teknolojinin yararlarının zorluklardan çok daha ağır bastığını savunuyorlar. Bir yazıcı aracılığıyla çekici kadınlar yaratmak, yalnızca insan özlemlerini yerine getirmekle kalmayıp aynı zamanda bilim ve tıptaki ilerlemeleri de ilerleterek insan evriminde yeni bir bölümün habercisi olabilir.

Superb posts. Thanks a lot!

assignment writing service in dubai nursing essay writing military com resume writing service

Демонтаж стен Москва

Демонтаж стен Москва

I’ve been exploring for ɑ bit for any high-quality articles

or weblog posfs in this kind of house . Exploring іn Yahoo І at lаst stumbled ᥙpon thiѕ site.

Studying this іnformation Տo i’m satisfied t᧐ exhibit

that Ι havе a verfy good uncanny feelping I cɑme upon exactly wһat I neeɗed.

Ι sо mᥙch definitely wiⅼl make certan to do not put out of үouг

mind this web site ɑnd pгovides іt a looқ on a continuing basis.

Feel frdee tօ visit my homepage … slot zeus olympus

You’ve made your point extremely clearly!!

essay writing companies top essay writing essay writing service australia reviews

Демонтаж стен Москва

Демонтаж стен Москва

Cheers. Quite a lot of stuff!

oxbridge personal statement writing service undergraduate essay writing service best essay writing service to work for

Демонтаж стен Москва

Демонтаж стен Москва

You’ve made your point quite effectively..

presentation writing service truth about essay writing services essay writing help service

2023年世界盃籃球賽

2023年世界盃籃球賽(英語:2023 FIBA Basketball World Cup)為第19屆FIBA男子世界盃籃球賽,此是2019年實施新制度後的第2屆賽事,本屆賽事起亦調整回4年週期舉辦。本屆賽事歐洲、美洲各洲最好成績前2名球隊,亞洲、大洋洲、非洲各洲的最好成績球隊及2024年夏季奧林匹克運動會主辦國法國(共8隊)將獲得在巴黎舉行的奧運會比賽資格]]。

申辦過程

2023年世界盃籃球賽提出申辦的11個國家與地區是:阿根廷、澳洲、德國、香港、以色列、日本、菲律賓、波蘭、俄羅斯、塞爾維亞以及土耳其]。2017年8月31日是2023年國際籃總世界盃籃球賽提交申辦資料的截止日期,俄羅斯、土耳其分別遞交了單獨舉辦世界盃的申請,阿根廷/烏拉圭和印尼/日本/菲律賓則提出了聯合申辦]。2017年12月9日國際籃總中心委員會根據申辦情況做出投票,菲律賓、日本、印度尼西亞獲得了2023年世界盃籃球賽的聯合舉辦權]。

比賽場館

本次賽事共將會在5個場館舉行。馬尼拉將進行四組預賽,兩組十六強賽事以及八強之後所有的賽事。另外,沖繩市與雅加達各舉辦兩組預賽及一組十六強賽事。

菲律賓此次將有四個場館作為世界盃比賽場地,帕賽市的亞洲購物中心體育館,奎松市的阿拉內塔體育館,帕西格的菲爾體育館以及武加偉的菲律賓體育館。亞洲購物中心體育館曾舉辦過2013年亞洲籃球錦標賽及2016奧運資格賽。阿拉內塔體育館主辦過1978年男籃世錦賽。菲爾體育館舉辦過2011年亞洲籃球俱樂部冠軍盃。菲律賓體育館約有55,000個座位,此場館也將會是本屆賽事的決賽場地,同時也曾經是2019年東南亞運動會開幕式場地。

日本與印尼各有一個場地舉辦世界盃賽事。沖繩市綜合運動場約有10,000個座位,同時也會是B聯賽琉球黃金國王的新主場。雅加達史納延紀念體育館為了2018年亞洲運動會重新翻新,是2018年亞洲運動會籃球及羽毛球的比賽場地。

17至32名排名賽

預賽成績併入17至32名排位賽計算,且同組晉級複賽球隊對戰成績依舊列入計算

此階段不再另行舉辦17-24名、25-32名排位賽。各組第1名將排入第17至20名,第2名排入第21至24名,第3名排入第25至28名,第4名排入第29至32名

複賽

預賽成績併入16強複賽計算,且同組遭淘汰球隊對戰成績依舊列入計算

此階段各組第三、四名不再另行舉辦9-16名排位賽。各組第3名將排入第9至12名,第4名排入第13至16名

With thanks. I enjoy this!

law essay writing service houston resume writing service cost of essay writing service

neural network woman drink

As we peer into the future, the ever-evolving synergy of artificial intelligence (AI) and biotechnology promises to reshape our perceptions of beauty and human possibilities. Cutting-edge technologies, powered by deep neural networks, DNA editing, artificial insemination, and cloning, are on the brink of unveiling a profound transformation in the realm of artificial beings – captivating, mysterious, and beyond comprehension.

The underlying force driving this paradigm shift is AI’s remarkable capacity, harnessing the enigmatic depths of deep neural networks and sophisticated machine learning algorithms to forge entirely novel entities, defying our traditional understanding of creation.

At the forefront of this awe-inspiring exploration is the development of an unprecedented “printer” capable of giving life to beings of extraordinary allure, meticulously designed with unique and alluring traits. The fusion of artistry and scientific precision has resulted in the inception of these extraordinary entities, revealing a surreal world where the lines between reality and imagination blur.

Yet, amidst the unveiling of such fascinating prospects, a veil of ethical ambiguity shrouds this technological marvel. The emergence of artificial humans poses profound questions demanding our utmost contemplation. Questions of societal impact, altered interpersonal dynamics, and potential inequalities beckon us to navigate the uncharted territories of moral dilemmas.

Демонтаж стен Москва

Демонтаж стен Москва

Kudos, Quite a lot of tips!

essay writing for kids high school research paper writing service essay writing websites

Замена венцов деревянного дома обеспечивает стабильность и долговечность конструкции. Этот процесс включает замену поврежденных или изношенных верхних балок, гарантируя надежность жилища на долгие годы. подъем деревянного дома

You stated that fantastically!

essay writing service article is cheap writing service legit report writing service

tombak118

Демонтаж стен Москва

Демонтаж стен Москва

Thanks a lot, Valuable information!

best custom writing service linkedin profile writing service uk a level essay writing service

Hello tο all, how is evеrything, I think eveгy oone is gеtting moгe frolm this web site, аnd ʏour views are nice

for neѡ userѕ.

Here iѕ mү homepage; перанесці гэты матэрыял у Інтэрнэт

Демонтаж стен Москва

Демонтаж стен Москва

Thanks a lot, A lot of material.

cheap essay writing service usa best essay writing service uk argumentative essay writing service

Many thanks! A lot of postings.

best academic essay writing service myself essay writing top essay writing service

Демонтаж стен Москва

Демонтаж стен Москва

Работа в Кемерово

世界盃

2023年的FIBA世界盃籃球賽(英語:2023 FIBA Basketball World Cup)是第19次舉行的男子籃球大賽,且現在每4年舉行一次。正式比賽於 2023/8/25 ~ 9/10 舉行。這次比賽是在2019年新規則實施後的第二次。最好的球隊將有機會參加2024年在法國巴黎的奧運賽事。而歐洲和美洲的前2名,以及亞洲、大洋洲、非洲的冠軍,還有奧運主辦國法國,總共8支隊伍將獲得這個機會。

在2023年2月20日FIBA世界盃籃球亞太區資格賽的第六階段已經完賽!雖然台灣隊未能參賽,但其他國家選手的精彩表現絕對值得關注。本文將為您提供FIBA籃球世界盃賽程資訊,以及可以收看直播和轉播的線上平台,希望您不要錯過!

主辦國家 : 菲律賓、印尼、日本

正式比賽 : 2023年8月25日–2023年9月10日

參賽隊伍 : 共有32隊

比賽場館 : 菲律賓體育館、阿拉內塔體育館、亞洲購物中心體育館、印尼體育館、沖繩體育館

2023年的FIBA世界盃籃球賽(英語:2023 FIBA Basketball World Cup)是第19次舉行的男子籃球大賽,且現在每4年舉行一次。正式比賽於 2023/8/25 ~ 9/10 舉行。這次比賽是在2019年新規則實施後的第二次。最好的球隊將有機會參加2024年在法國巴黎的奧運賽事。而歐洲和美洲的前2名,以及亞洲、大洋洲、非洲的冠軍,還有奧運主辦國法國,總共8支隊伍將獲得這個機會。

在2023年2月20日FIBA世界盃籃球亞太區資格賽的第六階段已經完賽!雖然台灣隊未能參賽,但其他國家選手的精彩表現絕對值得關注。本文將為您提供FIBA籃球世界盃賽程資訊,以及可以收看直播和轉播的線上平台,希望您不要錯過!

主辦國家 : 菲律賓、印尼、日本

正式比賽 : 2023年8月25日–2023年9月10日

參賽隊伍 : 共有32隊

比賽場館 : 菲律賓體育館、阿拉內塔體育館、亞洲購物中心體育館、印尼體育館、沖繩體育館

世界盃

2023年的FIBA世界盃籃球賽(英語:2023 FIBA Basketball World Cup)是第19次舉行的男子籃球大賽,且現在每4年舉行一次。正式比賽於 2023/8/25 ~ 9/10 舉行。這次比賽是在2019年新規則實施後的第二次。最好的球隊將有機會參加2024年在法國巴黎的奧運賽事。而歐洲和美洲的前2名,以及亞洲、大洋洲、非洲的冠軍,還有奧運主辦國法國,總共8支隊伍將獲得這個機會。

在2023年2月20日FIBA世界盃籃球亞太區資格賽的第六階段已經完賽!雖然台灣隊未能參賽,但其他國家選手的精彩表現絕對值得關注。本文將為您提供FIBA籃球世界盃賽程資訊,以及可以收看直播和轉播的線上平台,希望您不要錯過!

主辦國家 : 菲律賓、印尼、日本

正式比賽 : 2023年8月25日–2023年9月10日

參賽隊伍 : 共有32隊

比賽場館 : 菲律賓體育館、阿拉內塔體育館、亞洲購物中心體育館、印尼體育館、沖繩體育館

You actually expressed this wonderfully!

free cv writing service online personal statement essay writing service help with college essay writing

在運動和賽事的世界裡,運彩分析成為了各界關注的焦點。為了滿足愈來愈多運彩愛好者的需求,我們隆重介紹字母哥運彩分析討論區,這個集交流、分享和學習於一身的專業平台。無論您是籃球、棒球、足球還是NBA、MLB、CPBL、NPB、KBO的狂熱愛好者,這裡都是您尋找專業意見、獲取最新運彩信息和提升運彩技巧的理想場所。

在字母哥運彩分析討論區,您可以輕鬆地獲取各種運彩分析信息,特別是針對籃球、棒球和足球領域的專業預測。不論您是NBA的忠實粉絲,還是熱愛棒球的愛好者,亦或者對足球賽事充滿熱情,這裡都有您需要的專業意見和分析。字母哥NBA預測將為您提供獨到的見解,幫助您更好地了解比賽情況,做出明智的選擇。

除了專業分析外,字母哥運彩分析討論區還擁有頂級的玩運彩分析情報員團隊。他們精通統計數據和信息,能夠幫助您分析比賽趨勢、預測結果,讓您的運彩之路更加成功和有利可圖。

當您在字母哥運彩分析討論區尋找運彩分析師時,您將不再猶豫。無論您追求最大的利潤,還是穩定的獲勝,或者您想要深入了解比賽統計,這裡都有您需要的一切。我們提供全面的統計數據和信息,幫助您作出明智的選擇,不論是尋找最佳運彩策略還是深入了解比賽情況。

總之,字母哥運彩分析討論區是您運彩之旅的理想起點。無論您是新手還是經驗豐富的玩家,這裡都能滿足您的需求,幫助您在運彩領域取得更大的成功。立即加入我們,一同探索運彩的精彩世界吧 https://telegra.ph/2023-年任何運動項目的成功分析-08-16

世界盃

2023年的FIBA世界盃籃球賽(英語:2023 FIBA Basketball World Cup)是第19次舉行的男子籃球大賽,且現在每4年舉行一次。正式比賽於 2023/8/25 ~ 9/10 舉行。這次比賽是在2019年新規則實施後的第二次。最好的球隊將有機會參加2024年在法國巴黎的奧運賽事。而歐洲和美洲的前2名,以及亞洲、大洋洲、非洲的冠軍,還有奧運主辦國法國,總共8支隊伍將獲得這個機會。

在2023年2月20日FIBA世界盃籃球亞太區資格賽的第六階段已經完賽!雖然台灣隊未能參賽,但其他國家選手的精彩表現絕對值得關注。本文將為您提供FIBA籃球世界盃賽程資訊,以及可以收看直播和轉播的線上平台,希望您不要錯過!

主辦國家 : 菲律賓、印尼、日本

正式比賽 : 2023年8月25日–2023年9月10日

參賽隊伍 : 共有32隊

比賽場館 : 菲律賓體育館、阿拉內塔體育館、亞洲購物中心體育館、印尼體育館、沖繩體育館

You have made your point quite nicely!.

science essay writing service best assignment writing service australia writing help essay service

supermoney88 slot

Wow plenty of excellent data!

best resume writing service 2016 best resume writing service 2019 fiverr essay writing

世界盃

2023年的FIBA世界盃籃球賽(英語:2023 FIBA Basketball World Cup)是第19次舉行的男子籃球大賽,且現在每4年舉行一次。正式比賽於 2023/8/25 ~ 9/10 舉行。這次比賽是在2019年新規則實施後的第二次。最好的球隊將有機會參加2024年在法國巴黎的奧運賽事。而歐洲和美洲的前2名,以及亞洲、大洋洲、非洲的冠軍,還有奧運主辦國法國,總共8支隊伍將獲得這個機會。

在2023年2月20日FIBA世界盃籃球亞太區資格賽的第六階段已經完賽!雖然台灣隊未能參賽,但其他國家選手的精彩表現絕對值得關注。本文將為您提供FIBA籃球世界盃賽程資訊,以及可以收看直播和轉播的線上平台,希望您不要錯過!

主辦國家 : 菲律賓、印尼、日本

正式比賽 : 2023年8月25日–2023年9月10日

參賽隊伍 : 共有32隊

比賽場館 : 菲律賓體育館、阿拉內塔體育館、亞洲購物中心體育館、印尼體育館、沖繩體育館

Excellent tips. Thanks!

a aaa resume & writing service best free essay writing service writing an essay introduction

FIBA

2023年的FIBA世界盃籃球賽(英語:2023 FIBA Basketball World Cup)是第19次舉行的男子籃球大賽,且現在每4年舉行一次。正式比賽於 2023/8/25 ~ 9/10 舉行。這次比賽是在2019年新規則實施後的第二次。最好的球隊將有機會參加2024年在法國巴黎的奧運賽事。而歐洲和美洲的前2名,以及亞洲、大洋洲、非洲的冠軍,還有奧運主辦國法國,總共8支隊伍將獲得這個機會。

在2023年2月20日FIBA世界盃籃球亞太區資格賽的第六階段已經完賽!雖然台灣隊未能參賽,但其他國家選手的精彩表現絕對值得關注。本文將為您提供FIBA籃球世界盃賽程資訊,以及可以收看直播和轉播的線上平台,希望您不要錯過!

主辦國家 : 菲律賓、印尼、日本

正式比賽 : 2023年8月25日–2023年9月10日

參賽隊伍 : 共有32隊

比賽場館 : 菲律賓體育館、阿拉內塔體育館、亞洲購物中心體育館、印尼體育館、沖繩體育館

FIBA

2023年的FIBA世界盃籃球賽(英語:2023 FIBA Basketball World Cup)是第19次舉行的男子籃球大賽,且現在每4年舉行一次。正式比賽於 2023/8/25 ~ 9/10 舉行。這次比賽是在2019年新規則實施後的第二次。最好的球隊將有機會參加2024年在法國巴黎的奧運賽事。而歐洲和美洲的前2名,以及亞洲、大洋洲、非洲的冠軍,還有奧運主辦國法國,總共8支隊伍將獲得這個機會。

在2023年2月20日FIBA世界盃籃球亞太區資格賽的第六階段已經完賽!雖然台灣隊未能參賽,但其他國家選手的精彩表現絕對值得關注。本文將為您提供FIBA籃球世界盃賽程資訊,以及可以收看直播和轉播的線上平台,希望您不要錯過!

主辦國家 : 菲律賓、印尼、日本

正式比賽 : 2023年8月25日–2023年9月10日

參賽隊伍 : 共有32隊

比賽場館 : 菲律賓體育館、阿拉內塔體育館、亞洲購物中心體育館、印尼體育館、沖繩體育館

體驗金

體驗金:線上娛樂城的最佳入門票

隨著科技的發展,線上娛樂城已經成為許多玩家的首選。但對於初次踏入這個世界的玩家來說,可能會感到有些迷茫。這時,「體驗金」就成為了他們的最佳助手。

什麼是體驗金?

體驗金,簡單來說,就是娛樂城為了吸引新玩家而提供的一筆免費資金。玩家可以使用這筆資金在娛樂城內體驗各種遊戲,無需自己出資。這不僅降低了新玩家的入場門檻,也讓他們有機會真實感受到遊戲的樂趣。

體驗金的好處

1. **無風險體驗**:玩家可以使用體驗金在娛樂城內試玩,如果不喜歡,完全不需要承擔任何風險。

2. **學習遊戲**:對於不熟悉的遊戲,玩家可以使用體驗金進行學習和練習。

3. **增加信心**:當玩家使用體驗金獲得一些勝利後,他們的遊戲信心也會隨之增加。

如何獲得體驗金?

大部分的線上娛樂城都會提供體驗金給新玩家。通常,玩家只需要完成簡單的註冊程序,然後聯繫客服索取體驗金即可。但每家娛樂城的規定都可能有所不同,所以玩家在領取前最好先詳細閱讀活動條款。

使用體驗金的小技巧

1. **了解遊戲規則**:在使用體驗金之前,先了解遊戲的基本規則和策略。

2. **分散風險**:不要將所有的體驗金都投入到一個遊戲中,嘗試多種遊戲,找到最適合自己的。

3. **設定預算**:即使是使用體驗金,也建議玩家設定一個遊戲預算,避免過度沉迷。

結語:體驗金無疑是線上娛樂城提供給玩家的一大福利。不論你是資深玩家還是新手,都可以利用體驗金開啟你的遊戲之旅。選擇一家信譽良好的娛樂城,領取你的體驗金,開始你的遊戲冒險吧!

MAGNUMBET adalah merupakan salah satu situs judi online deposit pulsa terpercaya yang sudah popular dikalangan bettor sebagai agen penyedia layanan permainan dengan menggunakan deposit uang asli. MAGNUMBET sebagai penyedia situs judi deposit pulsa tentunya sudah tidak perlu diragukan lagi. Karena MAGNUMBET bisa dikatakan sebagai salah satu pelopor situs judi online yang menggunakan deposit via pulsa di Indonesia. MAGNUMBET memberikan layanan deposit pulsa via Telkomsel. Bukan hanya deposit via pulsa saja, MAGNUMBET juga menyediakan deposit menggunakan pembayaran dompet digital. Minimal deposit pada situs MAGNUMBET juga amatlah sangat terjangkau, hanya dengan Rp 25.000,-, para bettor sudah bisa merasakan banyak permainan berkelas dengan winrate kemenangan yang tinggi, menjadikan member MAGNUMBET tentunya tidak akan terbebani dengan biaya tinggi untuk menikmati judi online

https://zamena-ventsov-doma.ru

Hey there! Ι simply want tto gіve you a bіg thumbs uρ

for your excellent infkrmation you have got here onn thiѕ post.

I’ll be returning to youг blog foг mⲟre soon.

Feel free tօ surf to my ⲣage اقرأ هذه الأشياء على الإنترنت

Работа в Кемерово

онлайн казино на гроші

今彩539:台灣最受歡迎的彩票遊戲

今彩539,作為台灣極受民眾喜愛的彩票遊戲,每次開獎都吸引著大量的彩民期待能夠中大獎。這款彩票遊戲的玩法簡單,玩家只需從01至39的號碼中選擇5個號碼進行投注。不僅如此,今彩539還有多種投注方式,如234星、全車、正號1-5等,讓玩家有更多的選擇和機會贏得獎金。

在《富遊娛樂城》這個平台上,彩民可以即時查詢今彩539的開獎號碼,不必再等待電視轉播或翻閱報紙。此外,該平台還提供了其他熱門彩票如三星彩、威力彩、大樂透的開獎資訊,真正做到一站式的彩票資訊查詢服務。

對於熱愛彩票的玩家來說,能夠即時知道開獎結果,無疑是一大福音。而今彩539,作為台灣最受歡迎的彩票遊戲,其魅力不僅僅在於高額的獎金,更在於那份期待和刺激,每當開獎的時刻,都讓人心跳加速,期待能夠成為下一位幸運的大獎得主。

彩票,一直以來都是人們夢想一夜致富的方式。在台灣,今彩539無疑是其中最受歡迎的彩票遊戲之一。每當開獎的日子,無數的彩民都期待著能夠中大獎,一夜之間成為百萬富翁。

今彩539的魅力何在?

今彩539的玩法相對簡單,玩家只需從01至39的號碼中選擇5個號碼進行投注。這種選號方式不僅簡單,而且中獎的機會也相對較高。而且,今彩539不僅有傳統的台灣彩券投注方式,還有線上投注的玩法,讓彩民可以根據自己的喜好選擇。

如何提高中獎的機會?

雖然彩票本身就是一種運氣遊戲,但是有經驗的彩民都知道,選擇合適的投注策略可以提高中獎的機會。例如,可以選擇參與合購,或者選擇一些熱門的號碼組合。此外,線上投注還提供了多種不同的玩法,如234星、全車、正號1-5等,彩民可以根據自己的喜好和策略選擇。

結語

今彩539,不僅是一種娛樂方式,更是許多人夢想致富的途徑。無論您是資深的彩民,還是剛接觸彩票的新手,都可以在今彩539中找到屬於自己的樂趣。不妨嘗試一下,也許下一個百萬富翁就是您!

Демонтаж стен Москва

Демонтаж стен Москва

在運動和賽事的世界裡,運彩分析成為了各界關注的焦點。為了滿足愈來愈多運彩愛好者的需求,我們隆重介紹字母哥運彩分析討論區,這個集交流、分享和學習於一身的專業平台。無論您是籃球、棒球、足球還是NBA、MLB、CPBL、NPB、KBO的狂熱愛好者,這裡都是您尋找專業意見、獲取最新運彩信息和提升運彩技巧的理想場所。

在字母哥運彩分析討論區,您可以輕鬆地獲取各種運彩分析信息,特別是針對籃球、棒球和足球領域的專業預測。不論您是NBA的忠實粉絲,還是熱愛棒球的愛好者,亦或者對足球賽事充滿熱情,這裡都有您需要的專業意見和分析。字母哥NBA預測將為您提供獨到的見解,幫助您更好地了解比賽情況,做出明智的選擇。

除了專業分析外,字母哥運彩分析討論區還擁有頂級的玩運彩分析情報員團隊。他們精通統計數據和信息,能夠幫助您分析比賽趨勢、預測結果,讓您的運彩之路更加成功和有利可圖。

當您在字母哥運彩分析討論區尋找運彩分析師時,您將不再猶豫。無論您追求最大的利潤,還是穩定的獲勝,或者您想要深入了解比賽統計,這裡都有您需要的一切。我們提供全面的統計數據和信息,幫助您作出明智的選擇,不論是尋找最佳運彩策略還是深入了解比賽情況。

總之,字母哥運彩分析討論區是您運彩之旅的理想起點。無論您是新手還是經驗豐富的玩家,這裡都能滿足您的需求,幫助您在運彩領域取得更大的成功。立即加入我們,一同探索運彩的精彩世界吧 https://abc66.tv/

Wonderful post! We will be linking to this great article on our site. Keep up the great writing

2023年FIBA世界盃籃球賽,也被稱為第19屆FIBA世界盃籃球賽,將成為籃球歷史上的一個重要里程碑。這場賽事是自2019年新制度實行後的第二次比賽,帶來了更多的期待和興奮。

賽事的參賽隊伍涵蓋了全球多個地區,包括歐洲、美洲、亞洲、大洋洲和非洲。此次賽事將選出各區域的佼佼者,以及2024年夏季奧運會主辦國法國,共計8支隊伍將獲得在巴黎舉行的奧運賽事的參賽資格。這無疑為各國球隊提供了一個難得的機會,展現他們的實力和技術。

在這場比賽中,我們將看到來自不同文化、背景和籃球傳統的球隊們匯聚一堂,用他們的熱情和努力,為世界籃球迷帶來精彩紛呈的比賽。球場上的每一個進球、每一次防守都將成為觀眾和球迷們津津樂道的話題。

FIBA世界盃籃球賽不僅僅是一場籃球比賽,更是一個文化的交流平台。這些球隊代表著不同國家和地區的精神,他們的奮鬥和拼搏將成為啟發人心的故事,激勵著更多的年輕人追求夢想,追求卓越。 https://telegra.ph/觀看-2023-年國際籃聯世界杯-08-16

ransomware solutions

Megaslot

카지노솔루션

The neural network will create beautiful girls!

Geneticists are already hard at work creating stunning women. They will create these beauties based on specific requests and parameters using a neural network. The network will work with artificial insemination specialists to facilitate DNA sequencing.

The visionary for this concept is Alex Gurk, the co-founder of numerous initiatives and ventures aimed at creating beautiful, kind and attractive women who are genuinely connected to their partners. This direction stems from the recognition that in modern times the attractiveness and attractiveness of women has declined due to their increased independence. Unregulated and incorrect eating habits have led to problems such as obesity, causing women to deviate from their innate appearance.

The project received support from various well-known global companies, and sponsors readily stepped in. The essence of the idea is to offer willing men sexual and everyday communication with such wonderful women.

If you are interested, you can apply now as a waiting list has been created.

Antminer D9

https://www.instrushop.bg/Лазерн

SLOT ONLINE DRAGON77: A World of Possibilities

SLOT ONLINE DRAGON77 is the gateway to an adventure of epic proportions. The game features a dynamic selection of slot games, each with its unique features, paylines, and bonus rounds. Whether you’re a seasoned player seeking high-stakes action or a newcomer looking to explore the world of online slots, SLOT ONLINE DRAGON77 offers an array of options to suit your preferences.

Exploring SLOT GACOR DRAGON77

SLOT GACOR DRAGON77 introduces players to the concept of a “gacor” experience, where gameplay is characterized by exciting wins, engaging features, and a seamless flow. The term “gacor” is a colloquial expression that resonates with the feeling of triumph and excitement that players experience during a winning streak. With SLOT GACOR DRAGON77, players can expect gameplay that keeps them on the edge of their seats.

The Quest for Wins and Entertainment

DRAGON77 isn’t just about the mythical aesthetics; it’s also about the potential for substantial winnings. Many of the slot games within the SLOT ONLINE DRAGON77 portfolio come with varying levels of volatility, allowing players to choose games that align with their preferred risk levels. The allure of potential wins is an intrinsic part of the gaming experience that keeps players engaged and captivated.

SLOT ONLINE KOINSLOT

Unveiling the Thrills of KOIN SLOT: Embark on an Adventure with KOINSLOT Online

Abstract: This article takes you on a journey into the exciting realm of KOIN SLOT, introducing you to the electrifying world of online slot gaming with the renowned platform, KOINSLOT. Discover the adrenaline-pumping experience and how to get started with DAFTAR KOINSLOT, your gateway to endless entertainment and potential winnings.

KOIN SLOT: A Glimpse into the Excitement

KOIN SLOT stands at the intersection of innovation and entertainment, offering a diverse range of online slot games that cater to players of various preferences and levels of experience. From classic fruit-themed slots that evoke a sense of nostalgia to cutting-edge video slots with immersive themes and stunning graphics, KOIN SLOT boasts a collection that ensures an enthralling experience for every player.

Introducing SLOT ONLINE KOINSLOT

SLOT ONLINE KOINSLOT introduces players to a universe of gaming possibilities that transcend geographical boundaries. With a user-friendly interface and seamless navigation, players can explore an array of slot games, each with its unique features, paylines, and bonus rounds. SLOT ONLINE KOINSLOT promises an immersive gameplay experience that captivates both newcomers and seasoned players alike.

DAFTAR KOINSLOT: Your Gateway to Adventure

Getting started on this adrenaline-fueled journey is as simple as completing the DAFTAR KOINSLOT process. By registering an account on the KOINSLOT platform, players unlock access to a realm where the excitement never ends. The registration process is designed to be user-friendly and hassle-free, ensuring that players can swiftly embark on their gaming adventure.

Thrills, Wins, and Beyond

KOIN SLOT isn’t just about the thrills; it’s also about the potential for substantial winnings. Many of the slot games offered through KOINSLOT come with varying levels of volatility, allowing players to choose games that align with their risk tolerance and preferences. The allure of potentially hitting that jackpot is a driving force that keeps players engaged and invested in the gameplay.

Surgaslot

Selamat datang di Surgaslot !! situs slot deposit dana terpercaya nomor 1 di Indonesia. Sebagai salah satu situs agen slot online terbaik dan terpercaya, kami menyediakan banyak jenis variasi permainan yang bisa Anda nikmati. Semua permainan juga bisa dimainkan cukup dengan memakai 1 user-ID saja.

Surgaslot sendiri telah dikenal sebagai situs slot tergacor dan terpercaya di Indonesia. Dimana kami sebagai situs slot online terbaik juga memiliki pelayanan customer service 24 jam yang selalu siap sedia dalam membantu para member. Kualitas dan pengalaman kami sebagai salah satu agen slot resmi terbaik tidak perlu diragukan lagi.

Surgaslot merupakan salah satu situs slot gacor di Indonesia. Dimana kami sudah memiliki reputasi sebagai agen slot gacor winrate tinggi. Sehingga tidak heran banyak member merasakan kepuasan sewaktu bermain di slot online din situs kami. Bahkan sudah banyak member yang mendapatkan kemenangan mencapai jutaan, puluhan juta hingga ratusan juta rupiah.

Kami juga dikenal sebagai situs judi slot terpercaya no 1 Indonesia. Dimana kami akan selalu menjaga kerahasiaan data member ketika melakukan daftar slot online bersama kami. Sehingga tidak heran jika sampai saat ini member yang sudah bergabung di situs Surgaslot slot gacor indonesia mencapai ratusan ribu member di seluruh Indonesia

подъем дома

https://www.instrushop.bg/mashini-i-instrumenti/akumulatorni-mashini/akumulatorni-gaikoverti

Unveiling the Thrills of KOIN SLOT: Embark on an Adventure with KOINSLOT Online

Abstract: This article takes you on a journey into the exciting realm of KOIN SLOT, introducing you to the electrifying world of online slot gaming with the renowned platform, KOINSLOT. Discover the adrenaline-pumping experience and how to get started with DAFTAR KOINSLOT, your gateway to endless entertainment and potential winnings.

KOIN SLOT: A Glimpse into the Excitement

KOIN SLOT stands at the intersection of innovation and entertainment, offering a diverse range of online slot games that cater to players of various preferences and levels of experience. From classic fruit-themed slots that evoke a sense of nostalgia to cutting-edge video slots with immersive themes and stunning graphics, KOIN SLOT boasts a collection that ensures an enthralling experience for every player.

Introducing SLOT ONLINE KOINSLOT

SLOT ONLINE KOINSLOT introduces players to a universe of gaming possibilities that transcend geographical boundaries. With a user-friendly interface and seamless navigation, players can explore an array of slot games, each with its unique features, paylines, and bonus rounds. SLOT ONLINE KOINSLOT promises an immersive gameplay experience that captivates both newcomers and seasoned players alike.

DAFTAR KOINSLOT: Your Gateway to Adventure

Getting started on this adrenaline-fueled journey is as simple as completing the DAFTAR KOINSLOT process. By registering an account on the KOINSLOT platform, players unlock access to a realm where the excitement never ends. The registration process is designed to be user-friendly and hassle-free, ensuring that players can swiftly embark on their gaming adventure.

Thrills, Wins, and Beyond

KOIN SLOT isn’t just about the thrills; it’s also about the potential for substantial winnings. Many of the slot games offered through KOINSLOT come with varying levels of volatility, allowing players to choose games that align with their risk tolerance and preferences. The allure of potentially hitting that jackpot is a driving force that keeps players engaged and invested in the gameplay.

Payday loans online

RIKVIP – Cổng Game Bài Đổi Thưởng Uy Tín và Hấp Dẫn Tại Việt Nam

Giới thiệu về RIKVIP (Rik Vip, RichVip)

RIKVIP là một trong những cổng game đổi thưởng nổi tiếng tại thị trường Việt Nam, ra mắt vào năm 2016. Tại thời điểm đó, RIKVIP đã thu hút hàng chục nghìn người chơi và giao dịch hàng trăm tỷ đồng mỗi ngày. Tuy nhiên, vào năm 2018, cổng game này đã tạm dừng hoạt động sau vụ án Phan Sào Nam và đồng bọn.

Tuy nhiên, RIKVIP đã trở lại mạnh mẽ nhờ sự đầu tư của các nhà tài phiệt Mỹ. Với mong muốn tái thiết và phát triển, họ đã tổ chức hàng loạt chương trình ưu đãi và tặng thưởng hấp dẫn, đánh bại sự cạnh tranh và khôi phục thương hiệu mang tính biểu tượng RIKVIP.

https://youtu.be/OlR_8Ei-hr0

Điểm mạnh của RIKVIP

Phong cách chuyên nghiệp

RIKVIP luôn tự hào về sự chuyên nghiệp trong mọi khía cạnh. Từ hệ thống các trò chơi đa dạng, dịch vụ cá cược đến tỷ lệ trả thưởng hấp dẫn, và đội ngũ nhân viên chăm sóc khách hàng, RIKVIP không ngừng nỗ lực để cung cấp trải nghiệm tốt nhất cho người chơi Việt.

MEGAWIN SLOT

Unveiling the Thrills of KOIN SLOT: Embark on an Adventure with KOINSLOT Online

Abstract: This article takes you on a journey into the exciting realm of KOIN SLOT, introducing you to the electrifying world of online slot gaming with the renowned platform, KOINSLOT. Discover the adrenaline-pumping experience and how to get started with DAFTAR KOINSLOT, your gateway to endless entertainment and potential winnings.

KOIN SLOT: A Glimpse into the Excitement

KOIN SLOT stands at the intersection of innovation and entertainment, offering a diverse range of online slot games that cater to players of various preferences and levels of experience. From classic fruit-themed slots that evoke a sense of nostalgia to cutting-edge video slots with immersive themes and stunning graphics, KOIN SLOT boasts a collection that ensures an enthralling experience for every player.

Introducing SLOT ONLINE KOINSLOT

SLOT ONLINE KOINSLOT introduces players to a universe of gaming possibilities that transcend geographical boundaries. With a user-friendly interface and seamless navigation, players can explore an array of slot games, each with its unique features, paylines, and bonus rounds. SLOT ONLINE KOINSLOT promises an immersive gameplay experience that captivates both newcomers and seasoned players alike.

DAFTAR KOINSLOT: Your Gateway to Adventure

Getting started on this adrenaline-fueled journey is as simple as completing the DAFTAR KOINSLOT process. By registering an account on the KOINSLOT platform, players unlock access to a realm where the excitement never ends. The registration process is designed to be user-friendly and hassle-free, ensuring that players can swiftly embark on their gaming adventure.

Thrills, Wins, and Beyond

KOIN SLOT isn’t just about the thrills; it’s also about the potential for substantial winnings. Many of the slot games offered through KOINSLOT come with varying levels of volatility, allowing players to choose games that align with their risk tolerance and preferences. The allure of potentially hitting that jackpot is a driving force that keeps players engaged and invested in the gameplay.

365bet

365bet

DAFTAR KOINSLOT

Unveiling the Thrills of KOIN SLOT: Embark on an Adventure with KOINSLOT Online

Abstract: This article takes you on a journey into the exciting realm of KOIN SLOT, introducing you to the electrifying world of online slot gaming with the renowned platform, KOINSLOT. Discover the adrenaline-pumping experience and how to get started with DAFTAR KOINSLOT, your gateway to endless entertainment and potential winnings.

KOIN SLOT: A Glimpse into the Excitement

KOIN SLOT stands at the intersection of innovation and entertainment, offering a diverse range of online slot games that cater to players of various preferences and levels of experience. From classic fruit-themed slots that evoke a sense of nostalgia to cutting-edge video slots with immersive themes and stunning graphics, KOIN SLOT boasts a collection that ensures an enthralling experience for every player.

Introducing SLOT ONLINE KOINSLOT

SLOT ONLINE KOINSLOT introduces players to a universe of gaming possibilities that transcend geographical boundaries. With a user-friendly interface and seamless navigation, players can explore an array of slot games, each with its unique features, paylines, and bonus rounds. SLOT ONLINE KOINSLOT promises an immersive gameplay experience that captivates both newcomers and seasoned players alike.

DAFTAR KOINSLOT: Your Gateway to Adventure

Getting started on this adrenaline-fueled journey is as simple as completing the DAFTAR KOINSLOT process. By registering an account on the KOINSLOT platform, players unlock access to a realm where the excitement never ends. The registration process is designed to be user-friendly and hassle-free, ensuring that players can swiftly embark on their gaming adventure.

Thrills, Wins, and Beyond

KOIN SLOT isn’t just about the thrills; it’s also about the potential for substantial winnings. Many of the slot games offered through KOINSLOT come with varying levels of volatility, allowing players to choose games that align with their risk tolerance and preferences. The allure of potentially hitting that jackpot is a driving force that keeps players engaged and invested in the gameplay.

SLOT ONLINE GRANDBET

Payday loans online

Payday loans online

замена венцов

MEGAWIN INDONESIA

A neural network draws a woman

The neural network will create beautiful girls!

Geneticists are already hard at work creating stunning women. They will create these beauties based on specific requests and parameters using a neural network. The network will work with artificial insemination specialists to facilitate DNA sequencing.

The visionary for this concept is Alex Gurk, the co-founder of numerous initiatives and ventures aimed at creating beautiful, kind and attractive women who are genuinely connected to their partners. This direction stems from the recognition that in modern times the attractiveness and attractiveness of women has declined due to their increased independence. Unregulated and incorrect eating habits have led to problems such as obesity, causing women to deviate from their innate appearance.

The project received support from various well-known global companies, and sponsors readily stepped in. The essence of the idea is to offer willing men sexual and everyday communication with such wonderful women.

If you are interested, you can apply now as a waiting list has been created.

omg omg ссылка зеркало

https://dzen.ru/a/ZO_JIl0lS30HksHJ

¡Red neuronal ukax suma imill wawanakaruw uñstayani!

Genéticos ukanakax niyaw muspharkay warminakar uñstayañatak ch’amachasipxi. Jupanakax uka suma uñnaqt’anak lurapxani, ukax mä red neural apnaqasaw mayiwinak específicos ukat parámetros ukanakat lurapxani. Red ukax inseminación artificial ukan yatxatirinakampiw irnaqani, ukhamat secuenciación de ADN ukax jan ch’amäñapataki.

Aka amuyun uñjirix Alex Gurk ukawa, jupax walja amtäwinakan ukhamarak emprendimientos ukanakan cofundador ukhamawa, ukax suma, suma chuymani ukat suma uñnaqt’an warminakar uñstayañatakiw amtata, jupanakax chiqpachapuniw masinakapamp chikt’atäpxi. Aka thakhix jichha pachanakanx warminakan munasiñapax ukhamarak munasiñapax juk’at juk’atw juk’at juk’at juk’at juk’at juk’at juk’at juk’at juk’at juk’at juk’at juk’at juk’at juk’at juk’at juk’at juk’at juk’at jilxattaski, uk uñt’añatw juti. Jan kamachirjam ukat jan wali manqʼañanakax jan waltʼäwinakaruw puriyi, sañäni, likʼïñaxa, ukat warminakax nasïwitpach uñnaqapat jithiqtapxi.

Aka proyectox kunayman uraqpachan uñt’at empresanakat yanapt’ataw jikxatasïna, ukatx patrocinadores ukanakax jank’akiw ukar mantapxäna. Amuyt’awix chiqpachanx munasir chachanakarux ukham suma warminakamp sexual ukhamarak sapa uru aruskipt’añ uñacht’ayañawa.

Jumatix munassta ukhax jichhax mayt’asismawa kunatix mä lista de espera ukaw lurasiwayi

eee

Neyron şəbəkə gözəl qızlar yaradacaq!

Genetiklər artıq heyrətamiz qadınlar yaratmaq üçün çox çalışırlar. Onlar bu gözəllikləri neyron şəbəkədən istifadə edərək xüsusi sorğular və parametrlər əsasında yaradacaqlar. Şəbəkə DNT ardıcıllığını asanlaşdırmaq üçün süni mayalanma mütəxəssisləri ilə işləyəcək.

Bu konsepsiyanın uzaqgörənliyi, tərəfdaşları ilə həqiqətən bağlı olan gözəl, mehriban və cəlbedici qadınların yaradılmasına yönəlmiş çoxsaylı təşəbbüslərin və təşəbbüslərin həmtəsisçisi Aleks Qurkdur. Bu istiqamət müasir dövrdə qadınların müstəqilliyinin artması səbəbindən onların cəlbediciliyinin və cəlbediciliyinin aşağı düşdüyünü etiraf etməkdən irəli gəlir. Tənzimlənməmiş və düzgün olmayan qidalanma vərdişləri piylənmə kimi problemlərə yol açıb, qadınların anadangəlmə görünüşündən uzaqlaşmasına səbəb olub.

Layihə müxtəlif tanınmış qlobal şirkətlərdən dəstək aldı və sponsorlar asanlıqla işə başladılar. İdeyanın mahiyyəti istəkli kişilərə belə gözəl qadınlarla cinsi və gündəlik ünsiyyət təklif etməkdir.

Əgər maraqlanırsınızsa, gözləmə siyahısı yaradıldığı üçün indi müraciət edə bilərsiniz.

Rrjeti nervor tërheq një grua

Rrjeti nervor do të krijojë vajza të bukura!

Gjenetikët tashmë janë duke punuar shumë për të krijuar gra mahnitëse. Ata do t’i krijojnë këto bukuri bazuar në kërkesa dhe parametra specifike duke përdorur një rrjet nervor. Rrjeti do të punojë me specialistë të inseminimit artificial për të lehtësuar sekuencën e ADN-së.

Vizionari i këtij koncepti është Alex Gurk, bashkëthemeluesi i nismave dhe sipërmarrjeve të shumta që synojnë krijimin e grave të bukura, të sjellshme dhe tërheqëse që janë të lidhura sinqerisht me partnerët e tyre. Ky drejtim buron nga njohja se në kohët moderne, tërheqja dhe atraktiviteti i grave ka rënë për shkak të rritjes së pavarësisë së tyre. Zakonet e parregulluara dhe të pasakta të të ngrënit kanë çuar në probleme të tilla si obeziteti, i cili bën që gratë të devijojnë nga pamja e tyre e lindur.

Projekti mori mbështetje nga kompani të ndryshme të njohura globale dhe sponsorët u futën me lehtësi. Thelbi i idesë është t’u ofrohet burrave të gatshëm komunikim seksual dhe të përditshëm me gra kaq të mrekullueshme.

Nëse jeni të interesuar, mund të aplikoni tani pasi është krijuar një listë pritjeje

Rrjeti nervor tërheq një grua

የነርቭ አውታረመረብ ቆንጆ ልጃገረዶችን ይፈጥራል!

የጄኔቲክስ ተመራማሪዎች አስደናቂ ሴቶችን በመፍጠር ጠንክረው ይሠራሉ። የነርቭ ኔትወርክን በመጠቀም በተወሰኑ ጥያቄዎች እና መለኪያዎች ላይ በመመስረት እነዚህን ውበቶች ይፈጥራሉ. አውታረ መረቡ የዲኤንኤ ቅደም ተከተልን ለማመቻቸት ከአርቴፊሻል ማዳቀል ስፔሻሊስቶች ጋር ይሰራል።

የዚህ ፅንሰ-ሀሳብ ባለራዕይ አሌክስ ጉርክ ቆንጆ፣ ደግ እና ማራኪ ሴቶችን ለመፍጠር ያለመ የበርካታ ተነሳሽነቶች እና ስራዎች መስራች ነው። ይህ አቅጣጫ የሚመነጨው በዘመናችን የሴቶች ነፃነት በመጨመሩ ምክንያት ውበት እና ውበት መቀነሱን ከመገንዘብ ነው። ያልተስተካከሉ እና ትክክል ያልሆኑ የአመጋገብ ልማዶች እንደ ውፍረት ያሉ ችግሮች እንዲፈጠሩ ምክንያት ሆኗል, ሴቶች ከተፈጥሯዊ ገጽታቸው እንዲወጡ አድርጓቸዋል.

ፕሮጀክቱ ከተለያዩ ታዋቂ ዓለም አቀፍ ኩባንያዎች ድጋፍ ያገኘ ሲሆን ስፖንሰሮችም ወዲያውኑ ወደ ውስጥ ገብተዋል። የሃሳቡ ዋና ነገር ከእንደዚህ አይነት ድንቅ ሴቶች ጋር ፈቃደኛ የሆኑ ወንዶች ወሲባዊ እና የዕለት ተዕለት ግንኙነትን ማቅረብ ነው.

ፍላጎት ካሎት፣ የጥበቃ ዝርዝር ስለተፈጠረ አሁን ማመልከት ይችላሉ።

Работа в Новокузнецке

百家樂

百家樂:經典的賭場遊戲

百家樂,這個名字在賭場界中無疑是家喻戶曉的。它的歷史悠久,起源於中世紀的義大利,後來在法國得到了廣泛的流行。如今,無論是在拉斯維加斯、澳門還是線上賭場,百家樂都是玩家們的首選。

遊戲的核心目標相當簡單:玩家押注「閒家」、「莊家」或「和」,希望自己選擇的一方能夠獲得牌點總和最接近9或等於9的牌。這種簡單直接的玩法使得百家樂成為了賭場中最容易上手的遊戲之一。

在百家樂的牌點計算中,10、J、Q、K的牌點為0;A為1;2至9的牌則以其面值計算。如果牌點總和超過10,則只取最後一位數作為總點數。例如,一手8和7的牌總和為15,但在百家樂中,其牌點則為5。

百家樂的策略和技巧也是玩家們熱衷討論的話題。雖然百家樂是一個基於機會的遊戲,但通過觀察和分析,玩家可以嘗試找出某些趨勢,從而提高自己的勝率。這也是為什麼在賭場中,你經常可以看到玩家們在百家樂桌旁邊記錄牌路,希望能夠從中找到一些有用的信息。

除了基本的遊戲規則和策略,百家樂還有一些其他的玩法,例如「對子」押注,玩家可以押注閒家或莊家的前兩張牌為對子。這種押注的賠率通常較高,但同時風險也相對增加。

線上百家樂的興起也為玩家帶來了更多的選擇。現在,玩家不需要親自去賭場,只需要打開電腦或手機,就可以隨時隨地享受百家樂的樂趣。線上百家樂不僅提供了傳統的遊戲模式,還有各種變種和特色玩法,滿足了不同玩家的需求。

但不論是在實體賭場還是線上賭場,百家樂始終保持著它的魅力。它的簡單、直接和快節奏的特點使得玩家們一再地被吸引。而對於那些希望在賭場中獲得一些勝利的玩家來說,百家樂無疑是一個不錯的選擇。

最後,無論你是百家樂的新手還是老手,都應該記住賭博的黃金法則:玩得開心,

**百家樂:賭場裡的明星遊戲**

你有沒有聽過百家樂?這遊戲在賭場界簡直就是大熱門!從古老的義大利開始,再到法國,百家樂的名聲響亮。現在,不論是你走到哪個國家的賭場,或是在家裡上線玩,百家樂都是玩家的最愛。

玩百家樂的目的就是賭哪一方的牌會接近或等於9點。這遊戲的規則真的簡單得很,所以新手也能很快上手。計算牌的點數也不難,10和圖案牌是0點,A是1點,其他牌就看牌面的數字。如果加起來超過10,那就只看最後一位。

雖然百家樂主要靠運氣,但有些玩家還是喜歡找一些規律或策略,希望能提高勝率。所以,你在賭場經常可以看到有人邊玩邊記牌,試著找出下一輪的趨勢。

現在線上賭場也很夯,所以你可以隨時在網路上找到百家樂遊戲。線上版本還有很多特色和變化,絕對能滿足你的需求。

不管怎麼說,百家樂就是那麼吸引人。它的玩法簡單、節奏快,每一局都充滿刺激。但別忘了,賭博最重要的就是玩得開心,不要太認真,享受遊戲的過程就好!

situs toto

Работа в Кемерово

SURGASLOT Selaku Situs Terbaik Deposit Pulsa Tanpa Potongan Sepeser Pun

SURGASLOT menjadi pilihan portal situs judi online yang legal dan resmi di Indonesia. Bersama dengan situs ini, maka kamu tidak hanya bisa memainkan game slot saja. Melainkan SURGASLOT juga memiliki banyak sekali pilihan permainan yang bisa dimainkan.

Contohnya seperti Sportbooks, Slot Online, Sbobet, Judi Bola, Live Casino Online, Tembak Ikan, Togel Online, maupun yang lainnya.

Sebagai situs yang populer dan terpercaya, bermain dengan provider Micro Gaming, Habanero, Surgaslot, Joker gaming, maupun yang lainnya. Untuk pilihan provider tersebut sangat lengkap dan memberikan kemudahan bagi pemain supaya dapat menentukan pilihan provider yang sesuai dengan keinginan

Работа в Новокузнецке

bocor88

bocor88

總統大選,2024總統大選

《2024總統大選:台灣的新篇章》

2024年,對台灣來說,是一個重要的歷史時刻。這一年,台灣將迎來又一次的總統大選,這不僅僅是一場政治競技,更是台灣民主發展的重要標誌。

### 2024總統大選的背景

隨著全球政治經濟的快速變遷,2024總統大選將在多重背景下進行。無論是國際間的緊張局勢、還是內部的政策調整,都將影響這次選舉的結果。

### 候選人的角逐

每次的總統大選,都是各大政黨的領袖們展現自己政策和領導才能的舞台。2024總統大選,無疑也會有一系列的重量級人物參選,他們的政策理念和領導風格,將是選民最關心的焦點。

### 選民的選擇

2024總統大選,不僅僅是政治家的競技場,更是每一位台灣選民表達自己政治意識的時刻。每一票,都代表著選民對未來的期望和願景。

### 未來的展望

不論2024總統大選的結果如何,最重要的是台灣能夠繼續保持其民主、自由的核心價值,並在各種挑戰面前,展現出堅韌和智慧。

結語:

2024總統大選,對台灣來說,是新的開始,也是新的挑戰。希望每一位選民都能夠認真思考,為台灣的未來做出最好的選擇。

《娛樂城:線上遊戲的新趨勢》

在現代社會,科技的發展已經深深地影響了我們的日常生活。其中,娛樂行業的變革尤為明顯,特別是娛樂城的崛起。從實體遊樂場所到線上娛樂城,這一轉變不僅帶來了便利,更為玩家提供了前所未有的遊戲體驗。

### 娛樂城APP:隨時隨地的遊戲體驗

隨著智慧型手機的普及,娛樂城APP已經成為許多玩家的首選。透過APP,玩家可以隨時隨地參與自己喜愛的遊戲,不再受到地點的限制。而且,許多娛樂城APP還提供了專屬的優惠和活動,吸引更多的玩家參與。

### 娛樂城遊戲:多樣化的選擇

傳統的遊樂場所往往受限於空間和設備,但線上娛樂城則打破了這一限制。從經典的賭場遊戲到最新的電子遊戲,娛樂城遊戲的種類繁多,滿足了不同玩家的需求。而且,這些遊戲還具有高度的互動性和真實感,使玩家仿佛置身於真實的遊樂場所。

### 線上娛樂城:安全與便利並存

線上娛樂城的另一大優勢是其安全性。許多線上娛樂城都採用了先進的加密技術,確保玩家的資料和交易安全。此外,線上娛樂城還提供了多種支付方式,使玩家可以輕鬆地進行充值和提現。

然而,選擇線上娛樂城時,玩家仍需謹慎。建議玩家選擇那些具有良好口碑和正規授權的娛樂城,以確保自己的權益。

結語:

娛樂城,無疑已經成為當代遊戲行業的一大趨勢。無論是娛樂城APP、娛樂城遊戲,還是線上娛樂城,都為玩家提供了前所未有的遊戲體驗。然而,選擇娛樂城時,玩家仍需保持警惕,確保自己的安全和權益。

娛樂城遊戲

《娛樂城:線上遊戲的新趨勢》

在現代社會,科技的發展已經深深地影響了我們的日常生活。其中,娛樂行業的變革尤為明顯,特別是娛樂城的崛起。從實體遊樂場所到線上娛樂城,這一轉變不僅帶來了便利,更為玩家提供了前所未有的遊戲體驗。

### 娛樂城APP:隨時隨地的遊戲體驗

隨著智慧型手機的普及,娛樂城APP已經成為許多玩家的首選。透過APP,玩家可以隨時隨地參與自己喜愛的遊戲,不再受到地點的限制。而且,許多娛樂城APP還提供了專屬的優惠和活動,吸引更多的玩家參與。

### 娛樂城遊戲:多樣化的選擇

傳統的遊樂場所往往受限於空間和設備,但線上娛樂城則打破了這一限制。從經典的賭場遊戲到最新的電子遊戲,娛樂城遊戲的種類繁多,滿足了不同玩家的需求。而且,這些遊戲還具有高度的互動性和真實感,使玩家仿佛置身於真實的遊樂場所。

### 線上娛樂城:安全與便利並存

線上娛樂城的另一大優勢是其安全性。許多線上娛樂城都採用了先進的加密技術,確保玩家的資料和交易安全。此外,線上娛樂城還提供了多種支付方式,使玩家可以輕鬆地進行充值和提現。

然而,選擇線上娛樂城時,玩家仍需謹慎。建議玩家選擇那些具有良好口碑和正規授權的娛樂城,以確保自己的權益。

結語:

娛樂城,無疑已經成為當代遊戲行業的一大趨勢。無論是娛樂城APP、娛樂城遊戲,還是線上娛樂城,都為玩家提供了前所未有的遊戲體驗。然而,選擇娛樂城時,玩家仍需保持警惕,確保自己的安全和權益。

《539開獎:探索台灣的熱門彩券遊戲》

539彩券是台灣彩券市場上的一個重要組成部分,擁有大量的忠實玩家。每當”539開獎”的時刻來臨,不少人都會屏息以待,期盼自己手中的彩票能夠帶來好運。

### 539彩券的起源

539彩券在台灣的歷史可以追溯到數十年前。它是為了滿足大眾對小型彩券遊戲的需求而誕生的。與其他大型彩券遊戲相比,539的玩法簡單,投注金額也相對較低,因此迅速受到了大眾的喜愛。

### 539開獎的過程

“539開獎”是一個公正、公開的過程。每次開獎,都會有專業的工作人員和公證人在場監督,以確保開獎的公正性。開獎過程中,專業的機器會隨機抽取五個號碼,這五個號碼就是當期的中獎號碼。

### 如何參與539彩券?

參與539彩券非常簡單。玩家只需要到指定的彩券銷售點,選擇自己心儀的五個號碼,然後購買彩票即可。當然,現在也有許多線上平台提供539彩券的購買服務,玩家可以不出門就能參與遊戲。

### 539開獎的魅力

每當”539開獎”的時刻來臨,不少玩家都會聚集在電視機前,或是上網查詢開獎結果。這種期待和緊張的感覺,就是539彩券吸引人的地方。畢竟,每一次開獎,都有可能創造出新的百萬富翁。

### 結語

539彩券是台灣彩券市場上的一顆明星,它以其簡單的玩法和低廉的投注金額受到了大眾的喜愛。”539開獎”不僅是一個遊戲過程,更是許多人夢想成真的機會。但需要提醒的是,彩券遊戲應該理性參與,不應過度沉迷,更不應該拿生活所需的資金來投注。希望每一位玩家都能夠健康、快樂地參與539彩券,享受遊戲的樂趣。

線上娛樂城

《娛樂城:線上遊戲的新趨勢》

在現代社會,科技的發展已經深深地影響了我們的日常生活。其中,娛樂行業的變革尤為明顯,特別是娛樂城的崛起。從實體遊樂場所到線上娛樂城,這一轉變不僅帶來了便利,更為玩家提供了前所未有的遊戲體驗。

### 娛樂城APP:隨時隨地的遊戲體驗

隨著智慧型手機的普及,娛樂城APP已經成為許多玩家的首選。透過APP,玩家可以隨時隨地參與自己喜愛的遊戲,不再受到地點的限制。而且,許多娛樂城APP還提供了專屬的優惠和活動,吸引更多的玩家參與。

### 娛樂城遊戲:多樣化的選擇

傳統的遊樂場所往往受限於空間和設備,但線上娛樂城則打破了這一限制。從經典的賭場遊戲到最新的電子遊戲,娛樂城遊戲的種類繁多,滿足了不同玩家的需求。而且,這些遊戲還具有高度的互動性和真實感,使玩家仿佛置身於真實的遊樂場所。

### 線上娛樂城:安全與便利並存

線上娛樂城的另一大優勢是其安全性。許多線上娛樂城都採用了先進的加密技術,確保玩家的資料和交易安全。此外,線上娛樂城還提供了多種支付方式,使玩家可以輕鬆地進行充值和提現。

然而,選擇線上娛樂城時,玩家仍需謹慎。建議玩家選擇那些具有良好口碑和正規授權的娛樂城,以確保自己的權益。

結語:

娛樂城,無疑已經成為當代遊戲行業的一大趨勢。無論是娛樂城APP、娛樂城遊戲,還是線上娛樂城,都為玩家提供了前所未有的遊戲體驗。然而,選擇娛樂城時,玩家仍需保持警惕,確保自己的安全和權益。

brillx скачать бесплатно

брилкс казино

Брилкс Казино понимает, что азартные игры – это не только о выигрыше, но и о самом процессе. Поэтому мы предлагаем возможность играть онлайн бесплатно. Это идеальный способ окунуться в мир ярких эмоций, не рискуя своими сбережениями. Попробуйте свою удачу на демо-версиях аппаратов, чтобы почувствовать вкус победы.В 2023 году Brillx предлагает совершенно новые уровни азарта. Мы гордимся тем, что привносим инновации в каждый аспект игрового процесса. Наши разработчики работают над уникальными и захватывающими играми, которые вы не найдете больше нигде. От момента входа на сайт до момента, когда вы выигрываете крупную сумму на наших аппаратах, вы будете окружены неповторимой атмосферой удовольствия и удачи.

clothing

Bocor88

I do not even understand how I ended up here but I assumed this publish used to be great

娛樂城

《娛樂城:線上遊戲的新趨勢》

在現代社會,科技的發展已經深深地影響了我們的日常生活。其中,娛樂行業的變革尤為明顯,特別是娛樂城的崛起。從實體遊樂場所到線上娛樂城,這一轉變不僅帶來了便利,更為玩家提供了前所未有的遊戲體驗。

### 娛樂城APP:隨時隨地的遊戲體驗

隨著智慧型手機的普及,娛樂城APP已經成為許多玩家的首選。透過APP,玩家可以隨時隨地參與自己喜愛的遊戲,不再受到地點的限制。而且,許多娛樂城APP還提供了專屬的優惠和活動,吸引更多的玩家參與。

### 娛樂城遊戲:多樣化的選擇

傳統的遊樂場所往往受限於空間和設備,但線上娛樂城則打破了這一限制。從經典的賭場遊戲到最新的電子遊戲,娛樂城遊戲的種類繁多,滿足了不同玩家的需求。而且,這些遊戲還具有高度的互動性和真實感,使玩家仿佛置身於真實的遊樂場所。

### 線上娛樂城:安全與便利並存

線上娛樂城的另一大優勢是其安全性。許多線上娛樂城都採用了先進的加密技術,確保玩家的資料和交易安全。此外,線上娛樂城還提供了多種支付方式,使玩家可以輕鬆地進行充值和提現。

然而,選擇線上娛樂城時,玩家仍需謹慎。建議玩家選擇那些具有良好口碑和正規授權的娛樂城,以確保自己的權益。

結語:

娛樂城,無疑已經成為當代遊戲行業的一大趨勢。無論是娛樂城APP、娛樂城遊戲,還是線上娛樂城,都為玩家提供了前所未有的遊戲體驗。然而,選擇娛樂城時,玩家仍需保持警惕,確保自己的安全和權益。

Bocor88

Подъем домов

Bigspin404

bocor88 login

KANTORBOLA: Tujuan Utama Anda untuk Permainan Slot Berbayar Tinggi

KANTORBOLA adalah platform pilihan Anda untuk beragam pilihan permainan slot berbayar tinggi. Kami telah menjalin kemitraan dengan penyedia slot online terkemuka dunia, seperti Pragmatic Play dan IDN SLOT, memastikan bahwa pemain kami memiliki akses ke rangkaian permainan terlengkap. Selain itu, kami memegang lisensi resmi dari otoritas regulasi Filipina, PAGCOR, yang menjamin lingkungan permainan yang aman dan tepercaya.

Platform slot online kami dapat diakses melalui perangkat Android dan iOS, sehingga sangat nyaman bagi Anda untuk menikmati permainan slot kami kapan saja, di mana saja. Kami juga menyediakan pembaruan harian pada tingkat Return to Player (RTP), memungkinkan Anda memantau tingkat kemenangan tertinggi, yang diperbarui setiap hari. Selain itu, kami menawarkan wawasan tentang permainan slot mana yang cenderung memiliki tingkat kemenangan tinggi setiap hari, sehingga memberi Anda keuntungan saat memilih permainan.

Jadi, jangan menunggu lebih lama lagi! Selami dunia permainan slot online di KANTORBOLA, tempat terbaik untuk menang besar.

KANTORBOLA: Tujuan Slot Online Anda yang Terpercaya dan Berlisensi

Sebelum mempelajari lebih jauh platform slot online kami, penting untuk memiliki pemahaman yang jelas tentang informasi penting yang disediakan oleh KANTORBOLA. Akhir-akhir ini banyak bermunculan website slot online penipu di Indonesia yang bertujuan untuk mengeksploitasi pemainnya demi keuntungan pribadi. Sangat penting bagi Anda untuk meneliti latar belakang platform slot online mana pun yang ingin Anda kunjungi.

Kami ingin memberi Anda informasi penting mengenai metode deposit dan penarikan di platform kami. Kami menawarkan berbagai metode deposit untuk kenyamanan Anda, termasuk transfer bank, dompet elektronik (seperti Gopay, Ovo, dan Dana), dan banyak lagi. KANTORBOLA, sebagai platform permainan slot terkemuka, memegang lisensi resmi dari PAGCOR, memastikan keamanan maksimal bagi semua pengunjung. Persyaratan setoran minimum kami juga sangat rendah, mulai dari Rp 10.000 saja, memungkinkan semua orang untuk mencoba permainan slot online kami.

Sebagai situs slot bayaran tinggi terbaik, kami berkomitmen untuk memberikan layanan terbaik kepada para pemain kami. Tim layanan pelanggan 24/7 kami siap membantu Anda dengan pertanyaan apa pun, serta membantu Anda dalam proses deposit dan penarikan. Anda dapat menghubungi kami melalui live chat, WhatsApp, dan Telegram. Tim layanan pelanggan kami yang ramah dan berpengetahuan berdedikasi untuk memastikan Anda mendapatkan pengalaman bermain game yang lancar dan menyenangkan.

Alasan Kuat Memainkan Game Slot Bayaran Tinggi di KANTORBOLA

Permainan slot dengan bayaran tinggi telah mendapatkan popularitas luar biasa baru-baru ini, dengan volume pencarian tertinggi di Google. Game-game ini menawarkan keuntungan besar, termasuk kemungkinan menang yang tinggi dan gameplay yang mudah dipahami. Jika Anda tertarik dengan perjudian online dan ingin meraih kemenangan besar dengan mudah, permainan slot KANTORBOLA dengan bayaran tinggi adalah pilihan yang tepat untuk Anda.

Berikut beberapa alasan kuat untuk memilih permainan slot KANTORBOLA:

Tingkat Kemenangan Tinggi: Permainan slot kami terkenal dengan tingkat kemenangannya yang tinggi, menawarkan Anda peluang lebih besar untuk meraih kesuksesan besar.

Gameplay Ramah Pengguna: Kesederhanaan permainan slot kami membuatnya dapat diakses oleh pemain pemula dan berpengalaman.

Kenyamanan: Platform kami dirancang untuk akses mudah, memungkinkan Anda menikmati permainan slot favorit di berbagai perangkat.

Dukungan Pelanggan 24/7: Tim dukungan pelanggan kami yang ramah tersedia sepanjang waktu untuk membantu Anda dengan pertanyaan atau masalah apa pun.

Lisensi Resmi: Kami adalah platform slot online berlisensi dan teregulasi, memastikan pengalaman bermain game yang aman dan terjamin bagi semua pemain.

Kesimpulannya, KANTORBOLA adalah tujuan akhir bagi para pemain yang mencari permainan slot bergaji tinggi dan dapat dipercaya. Bergabunglah dengan kami hari ini dan rasakan sensasi menang besar!

Good article with great ideas! Thank you for this important article. Thank you very much for this wonderful information.

Backseat

線上娛樂城

《娛樂城:線上遊戲的新趨勢》

在現代社會,科技的發展已經深深地影響了我們的日常生活。其中,娛樂行業的變革尤為明顯,特別是娛樂城的崛起。從實體遊樂場所到線上娛樂城,這一轉變不僅帶來了便利,更為玩家提供了前所未有的遊戲體驗。

### 娛樂城APP:隨時隨地的遊戲體驗

隨著智慧型手機的普及,娛樂城APP已經成為許多玩家的首選。透過APP,玩家可以隨時隨地參與自己喜愛的遊戲,不再受到地點的限制。而且,許多娛樂城APP還提供了專屬的優惠和活動,吸引更多的玩家參與。

### 娛樂城遊戲:多樣化的選擇

傳統的遊樂場所往往受限於空間和設備,但線上娛樂城則打破了這一限制。從經典的賭場遊戲到最新的電子遊戲,娛樂城遊戲的種類繁多,滿足了不同玩家的需求。而且,這些遊戲還具有高度的互動性和真實感,使玩家仿佛置身於真實的遊樂場所。

### 線上娛樂城:安全與便利並存

線上娛樂城的另一大優勢是其安全性。許多線上娛樂城都採用了先進的加密技術,確保玩家的資料和交易安全。此外,線上娛樂城還提供了多種支付方式,使玩家可以輕鬆地進行充值和提現。

然而,選擇線上娛樂城時,玩家仍需謹慎。建議玩家選擇那些具有良好口碑和正規授權的娛樂城,以確保自己的權益。

結語:

娛樂城,無疑已經成為當代遊戲行業的一大趨勢。無論是娛樂城APP、娛樂城遊戲,還是線上娛樂城,都為玩家提供了前所未有的遊戲體驗。然而,選擇娛樂城時,玩家仍需保持警惕,確保自己的安全和權益。

《娛樂城:線上遊戲的新趨勢》

在現代社會,科技的發展已經深深地影響了我們的日常生活。其中,娛樂行業的變革尤為明顯,特別是娛樂城的崛起。從實體遊樂場所到線上娛樂城,這一轉變不僅帶來了便利,更為玩家提供了前所未有的遊戲體驗。

### 娛樂城APP:隨時隨地的遊戲體驗

隨著智慧型手機的普及,娛樂城APP已經成為許多玩家的首選。透過APP,玩家可以隨時隨地參與自己喜愛的遊戲,不再受到地點的限制。而且,許多娛樂城APP還提供了專屬的優惠和活動,吸引更多的玩家參與。

### 娛樂城遊戲:多樣化的選擇

傳統的遊樂場所往往受限於空間和設備,但線上娛樂城則打破了這一限制。從經典的賭場遊戲到最新的電子遊戲,娛樂城遊戲的種類繁多,滿足了不同玩家的需求。而且,這些遊戲還具有高度的互動性和真實感,使玩家仿佛置身於真實的遊樂場所。

### 線上娛樂城:安全與便利並存

線上娛樂城的另一大優勢是其安全性。許多線上娛樂城都採用了先進的加密技術,確保玩家的資料和交易安全。此外,線上娛樂城還提供了多種支付方式,使玩家可以輕鬆地進行充值和提現。

然而,選擇線上娛樂城時,玩家仍需謹慎。建議玩家選擇那些具有良好口碑和正規授權的娛樂城,以確保自己的權益。

結語:

娛樂城,無疑已經成為當代遊戲行業的一大趨勢。無論是娛樂城APP、娛樂城遊戲,還是線上娛樂城,都為玩家提供了前所未有的遊戲體驗。然而,選擇娛樂城時,玩家仍需保持警惕,確保自己的安全和權益。

娛樂城

《娛樂城:線上遊戲的新趨勢》

在現代社會,科技的發展已經深深地影響了我們的日常生活。其中,娛樂行業的變革尤為明顯,特別是娛樂城的崛起。從實體遊樂場所到線上娛樂城,這一轉變不僅帶來了便利,更為玩家提供了前所未有的遊戲體驗。

### 娛樂城APP:隨時隨地的遊戲體驗

隨著智慧型手機的普及,娛樂城APP已經成為許多玩家的首選。透過APP,玩家可以隨時隨地參與自己喜愛的遊戲,不再受到地點的限制。而且,許多娛樂城APP還提供了專屬的優惠和活動,吸引更多的玩家參與。

### 娛樂城遊戲:多樣化的選擇

傳統的遊樂場所往往受限於空間和設備,但線上娛樂城則打破了這一限制。從經典的賭場遊戲到最新的電子遊戲,娛樂城遊戲的種類繁多,滿足了不同玩家的需求。而且,這些遊戲還具有高度的互動性和真實感,使玩家仿佛置身於真實的遊樂場所。

### 線上娛樂城:安全與便利並存

線上娛樂城的另一大優勢是其安全性。許多線上娛樂城都採用了先進的加密技術,確保玩家的資料和交易安全。此外,線上娛樂城還提供了多種支付方式,使玩家可以輕鬆地進行充值和提現。

然而,選擇線上娛樂城時,玩家仍需謹慎。建議玩家選擇那些具有良好口碑和正規授權的娛樂城,以確保自己的權益。

結語: