The DATA wave in finance

Nasdaq, the second-largest stock exchange in the world, has invested in technology and web-scraped financial data by acquiring Quandl, one of the largest alternative data platforms.

The need for data-driven insight has always been the norm in the financial industry, primarily to make well-evaluated investment decisions. This is why financial institutions (hedge funds, banks, and asset managers) all hoard data to keep their high-stakes investment decisions data-backed. Though the sector well understands the need for information, be it for equity research analysis, venture capital investment, hedge fund management, or asset management, these institutions often lack the tools to scrape financial data and structure it in a format that yields valuable insights.

Why consider scraping in finance?

Data is available from many sources and in many forms. It turns out that every bit of it matters and can contribute to better decisions. For instance, hints of mergers and acquisitions can be identified by tracking CEOs' travel patterns, as Kamel, CEO of Quandl, explains:

“What we’re interested in doing is tracking corporate, private jets. Most companies hide the identity of their corporate jets, but it’s possible to unmask them. Researchers carefully watching websites like FlightAware.com could theoretically piece together flight records to figure out individual planes’ tail numbers.”

Tracking large volumes of information such as news, social media, satellite data, and app data through an automated process such as scraping can help financial companies gain valuable insights.

Another interesting example is Goldman Sachs Asset Management, which was able to identify an increase in visitors to the HomeDepot.com website by scraping website traffic data from alexa.com. This helped the asset manager buy the stock well before the company raised its outlook and its stock eventually appreciated.

Web scraping in hedge funds

Hedge funds are investments that carry some risk to the return on investment (ROI), hence the need to rely on data to cope with the volatility of the hedge fund market. Web scraping stock data provides investors with information covering all angles (market forces, consumer behavior, competitive intelligence, etc.), which makes strategic decisions easier.

Going beyond traditional sources like market data (earnings and macroeconomic data), a majority of hedge fund managers are beginning to see the potential in alternative data such as satellite imagery, geolocation, and web-scraped data. Buyers of such data increasingly recognize the power of web data to unlock insights that give them an informational advantage over their peers.

A hedge fund manager relies on web extraction by a third-party scraping service provider to obtain these data sets. The data can then be scrutinized by data scientists partnering with portfolio managers to draw insights.

How much web-scraped information helps efficient decision-making depends largely on an effective financial structure and on the data scientists and portfolio managers identifying the right data sets. The aim is to identify alpha opportunities (alpha is a metric that represents the active return on an investment).
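As a rough illustration of the metric, alpha in its simplest form is the portfolio's return minus its benchmark's return over the same period. The sketch below assumes that simple active-return definition (not a risk-adjusted measure such as Jensen's alpha); the function name and the return figures are made up for the example.

```python
def active_return(portfolio_return: float, benchmark_return: float) -> float:
    """Simple alpha: how much the portfolio beat (or lagged) its benchmark."""
    return portfolio_return - benchmark_return


# Hypothetical figures: the fund returned 12% while its benchmark returned 9%.
alpha = active_return(0.12, 0.09)
print(f"alpha = {alpha:.2%}")
```

A positive value means the fund outperformed its benchmark over the period; a negative value means it lagged.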

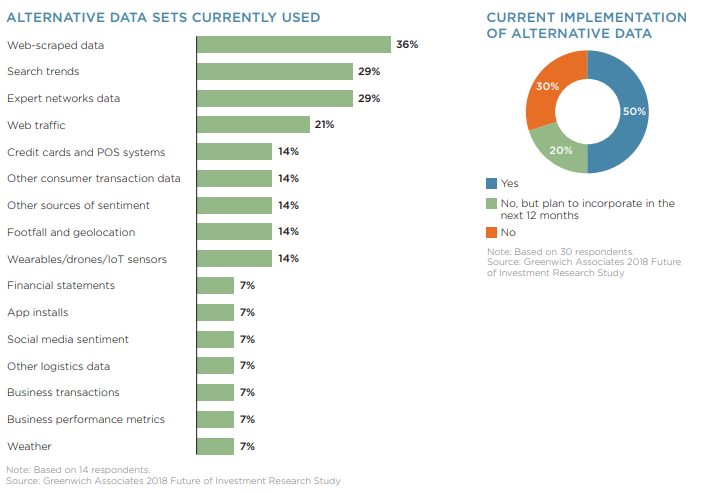

According to Greenwich / Thomson Reuters research, the average investment firm spends about $900,000 a year on alternative data, and the most popular form of alternative data among investment professionals is web-scraped data. Of all the alternative data methods available to hedge funds, web scraping is identified as the most effective.

What are the use cases of scraping in finance?

Equity research analysis

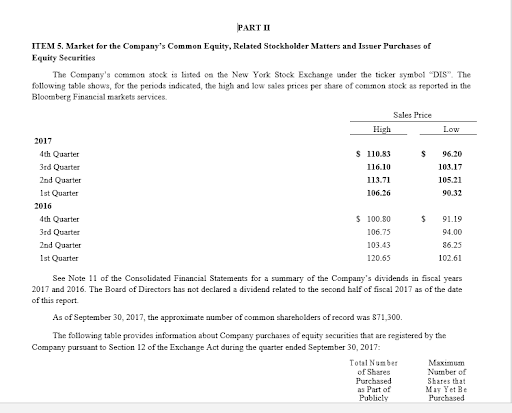

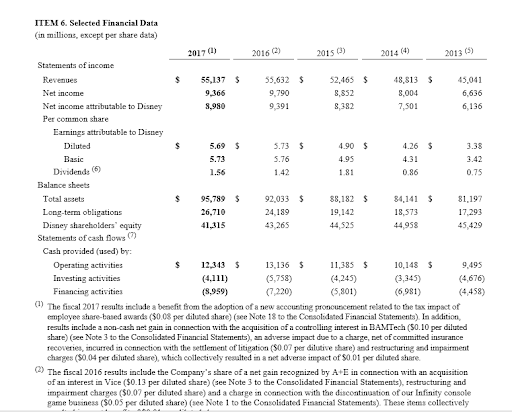

A major investment decision requires an assessment of the financial position of the company in which you intend to invest. Generally, the information needs to be gathered from the profit and loss statement, balance sheet, and cash flow statement for several years. These numbers can then be evaluated through ratio analysis (solvency and profitability ratios).

This data is available on company websites in the investor relations section (most public limited companies have a dedicated page) and in quarterly or annual reports. The information on these pages and in these PDFs can be scraped to gain insight into a company's financial strength.

You can take a look at the investor relations page of Walt Disney.

This type of data is also available in the EDGAR database, which holds annual reports and other filings that can be downloaded or viewed for free.
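To make ratio analysis concrete, here is a minimal sketch that computes one solvency ratio (debt-to-equity) and one profitability ratio (net profit margin) from statement line items. The `Financials` container and all figures are hypothetical; in practice the inputs would come from the scraped statements.

```python
from dataclasses import dataclass


@dataclass
class Financials:
    """A few line items taken from the statements (hypothetical container)."""
    revenue: float              # profit and loss statement
    net_income: float           # profit and loss statement
    total_liabilities: float    # balance sheet
    shareholders_equity: float  # balance sheet


def debt_to_equity(f: Financials) -> float:
    """Solvency ratio: total liabilities per unit of shareholders' equity."""
    return f.total_liabilities / f.shareholders_equity


def net_profit_margin(f: Financials) -> float:
    """Profitability ratio: share of revenue left over as net income."""
    return f.net_income / f.revenue


# Made-up numbers (in millions) for two consecutive years.
history = {
    2017: Financials(revenue=1000.0, net_income=100.0,
                     total_liabilities=600.0, shareholders_equity=400.0),
    2018: Financials(revenue=1200.0, net_income=150.0,
                     total_liabilities=600.0, shareholders_equity=500.0),
}

for year, f in sorted(history.items()):
    print(year, f"D/E={debt_to_equity(f):.2f}", f"margin={net_profit_margin(f):.1%}")
```

Computing the same ratios across several years is what turns the raw statement figures into a trend that can be compared between companies.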
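A first step in scraping an investor relations page is usually to collect the links to the report PDFs. The sketch below does this with Python's standard library only. The HTML snippet and the `investors.example.com` URL are stand-ins, not the markup of any real site, and `PdfLinkParser` is a name chosen for this example.

```python
from html.parser import HTMLParser
from urllib.parse import urljoin


class PdfLinkParser(HTMLParser):
    """Collect absolute URLs of all <a href="..."> links that point to PDFs."""

    def __init__(self, base_url: str):
        super().__init__()
        self.base_url = base_url
        self.pdf_links = []

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        href = dict(attrs).get("href")
        # Ignore any query string when checking the file extension.
        if href and href.lower().split("?")[0].endswith(".pdf"):
            self.pdf_links.append(urljoin(self.base_url, href))


# Stand-in for a downloaded investor relations page (hypothetical markup).
sample_html = """
<ul>
  <li><a href="/reports/2017-annual-report.pdf">2017 Annual Report</a></li>
  <li><a href="/reports/q1-2018-earnings.PDF?download=1">Q1 2018 Earnings</a></li>
  <li><a href="/contact">Contact investor relations</a></li>
</ul>
"""

parser = PdfLinkParser("https://investors.example.com/")
parser.feed(sample_html)
for link in parser.pdf_links:
    print(link)
```

Each collected URL can then be downloaded and passed to a PDF text extraction step.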
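EDGAR also exposes filing metadata as JSON, which avoids parsing HTML altogether. The sketch below filters such metadata for annual reports (form 10-K). It assumes the layout of EDGAR's company submissions JSON, in which `filings.recent` holds parallel arrays such as `form`, `filingDate`, and `accessionNumber`; verify the field names against the SEC's documentation before relying on them. The payload here is a made-up excerpt, not real filing data.

```python
import json


def filings_of_type(submissions: dict, form_type: str) -> list:
    """Return (filing date, accession number) pairs for one form type."""
    recent = submissions["filings"]["recent"]
    rows = zip(recent["form"], recent["filingDate"], recent["accessionNumber"])
    return [(date, accession) for form, date, accession in rows if form == form_type]


# Made-up excerpt that mimics the assumed layout; not real filing data.
sample = json.loads("""
{
  "name": "EXAMPLE CO",
  "filings": {"recent": {
    "form": ["10-Q", "10-K", "8-K", "10-K"],
    "filingDate": ["2018-08-07", "2017-11-22", "2017-11-09", "2016-11-23"],
    "accessionNumber": ["0000000000-18-000003", "0000000000-17-000002",
                        "0000000000-17-000001", "0000000000-16-000001"]
  }}
}
""")

for date, accession in filings_of_type(sample, "10-K"):
    print(date, accession)
```

Passing `"10-Q"` instead would select the quarterly reports.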

Let’s quickly get to an example: sample code for scraping financial statement reports (PDFs) from the Walt Disney website. These annual reports hold tons of financial data points. Extracting this data from annual or quarterly reports for several years helps in identifying patterns, and a thorough analysis of them helps in making better-informed decisions.

Here’s sample code to scrape out a critical piece, the balance sheet, from a Walt Disney PDF document.

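    # Uses pypdf, the maintained successor of the original pyPdf package.
    from pypdf import PdfReader

    def pdf_to_word(file_name, start_page=25, pages_extract=3):
        """Yield text lines from `pages_extract` pages, starting at `start_page` (0-based)."""
        reader = PdfReader(file_name)
        # Stop at the last page if the document is shorter than expected.
        end_page = min(start_page + pages_extract, len(reader.pages))
        for i in range(start_page, end_page):
            for line in reader.pages[i].extract_text().splitlines():
                yield line

    # Plain text is written out; the .doc extension only lets Word open the file.
    with open('extractedreport.doc', 'w', encoding='utf-8') as f:
        for line in pdf_to_word('Report.pdf'):
            f.write(line + '\n')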

This sample shows how to scrape specific pages containing financial data points from a large PDF document. The extracted text of those pages is written to extractedreport.doc.
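Page numbers differ from report to report, so hard-coding them does not scale across several years of filings. One possible refinement, sketched below, is to locate the relevant pages by keyword once the text of each page has been extracted. The function name, the keywords, and the sample page texts are illustrative assumptions.

```python
def find_pages(page_texts, keywords):
    """Return 0-based indices of pages whose text contains every keyword."""
    wanted = [k.lower() for k in keywords]
    hits = []
    for index, text in enumerate(page_texts):
        lowered = text.lower()
        if all(k in lowered for k in wanted):
            hits.append(index)
    return hits


# Stand-in page texts; in practice these would come from the PDF extraction step.
pages = [
    "Letter to shareholders",
    "CONSOLIDATED BALANCE SHEETS\nTotal assets\nTotal liabilities",
    "CONSOLIDATED STATEMENTS OF CASH FLOWS\nOperating activities",
]

print(find_pages(pages, ["balance sheet", "total assets"]))
```

Requiring more than one keyword reduces false matches, for example a table of contents that merely mentions the balance sheet.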

Scraping financial data and credit ratings

Credit ratings assess the financial strength of borrowing entities, that is, their ability to meet principal and interest payments. This information is particularly useful for the clients of rating agencies (such as institutional investors, banks, and insurance companies), who can evaluate borrowers using near real-time updates. This type of data can be scraped from websites, Google Finance pages, and Bloomberg Research.

Venture capital

Small businesses and start-ups require funding from big businesses, which in turn need to research these companies before investing. This kind of data is usually available on websites that profile new businesses and products, such as TechCrunch and VentureBeat.

There are also a ton of trends, technologies, and portfolio companies to monitor before making an investment decision. A solution like scraping helps extract and aggregate this data in a structured format to support a strategic venture capital decision.

Risk mitigation and compliance

Compliance with regulation is very important in the financial industry and is subject to great scrutiny; a breach can lead to penalties of millions of dollars and subsequent remediation costs. Through automated monitoring of sources that post regular updates (government regulations, court records, sanctions lists, etc.), you can effectively improve your compliance and risk management position.

Even if these sites are complex or difficult to access, scraping helps extract regulatory updates so you can stay abreast of developments and identify fraud.
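One simple way to automate this kind of monitoring is to fingerprint each scraped source and flag the ones whose content changed since the last run. The sketch below assumes the page text has already been downloaded; the source names and contents are hypothetical, and hashing is just one possible change-detection approach.

```python
import hashlib


def fingerprint(content: str) -> str:
    """Stable SHA-256 digest of a page's text, ignoring whitespace differences."""
    normalized = " ".join(content.split())
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def changed_sources(previous: dict, current_pages: dict) -> list:
    """Names of sources that are new or whose content differs from the last run."""
    return sorted(
        name for name, content in current_pages.items()
        if previous.get(name) != fingerprint(content)
    )


# Hypothetical source names and contents standing in for scraped pages.
last_run = {
    "sanctions_list": fingerprint("Entity A\nEntity B"),
    "regulator_notices": fingerprint("Notice 1"),
}
todays_pages = {
    "sanctions_list": "Entity A\nEntity B\nEntity C",  # one entry added
    "regulator_notices": "Notice   1",                  # whitespace change only
    "court_records": "Case 42",                         # newly monitored source
}

print(changed_sources(last_run, todays_pages))
```

Only the flagged sources then need a closer look, which keeps the review workload manageable as the number of monitored sites grows.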

Ditch internet surfing and use scraping instead.

The finance industry needs tons of crucial information to make strategic business decisions. Scraping financial data has proven to be a solution for various use cases, including venture capital, hedge funds, and equity research analysis. The potential of scraping is immense, and the volume and variety of data it can deliver within a quick turnaround time (TAT) is something every financial service provider should leverage.

Scrapeworks is built to scour web data in a structured manner and deliver information that can redefine the value of what the Internet has to offer.

You can set your parameters for the scraping requirements and we can deliver the data that you want.

Read through our customer stories to understand how we extracted crucial data points from company reports and financial statements for a leading news agency, and how we carried out extensive crawling and extraction of financial information for a leading financial services firm.

If you have a similar need, do get in touch with us.